Runway ML has announced Gen-3 Alpha, its latest generative AI video model, which lets users create ultra-realistic, high-quality 10-second scenes from text prompts, still images, or pre-recorded videos. Runway’s CTO, Anastasis Germanidis, described the model’s advancements, including finer control over camera and object motion and a modular framework that allows new tools to be integrated more quickly. Gen-3 Alpha promises greater geometric consistency and closer adherence to prompts, which Runway says sets it apart from its predecessors and from competing models. The release will be phased, starting with paid users. The model has already drawn interest from professional filmmakers and media companies for its potential to streamline creative work. However, Runway emphasizes that while AI can speed up iteration and help bring unique ideas to life, traditional filmmaking techniques will still play a significant role. The company foresees a collaborative future in which AI tools complement human creativity rather than replace it.

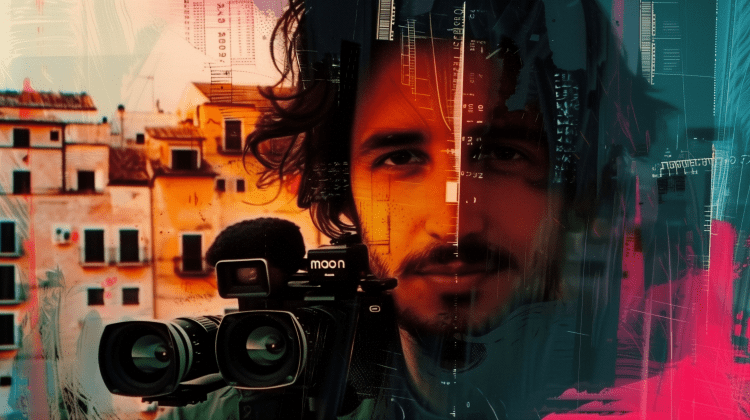

Meet Gen-3 Alpha – Runway ML’s Leap in Generative AI for Video Creation

Runway ML’s Gen-3 Alpha sets a new standard in generative AI video modeling with advanced features and a phased rollout.
