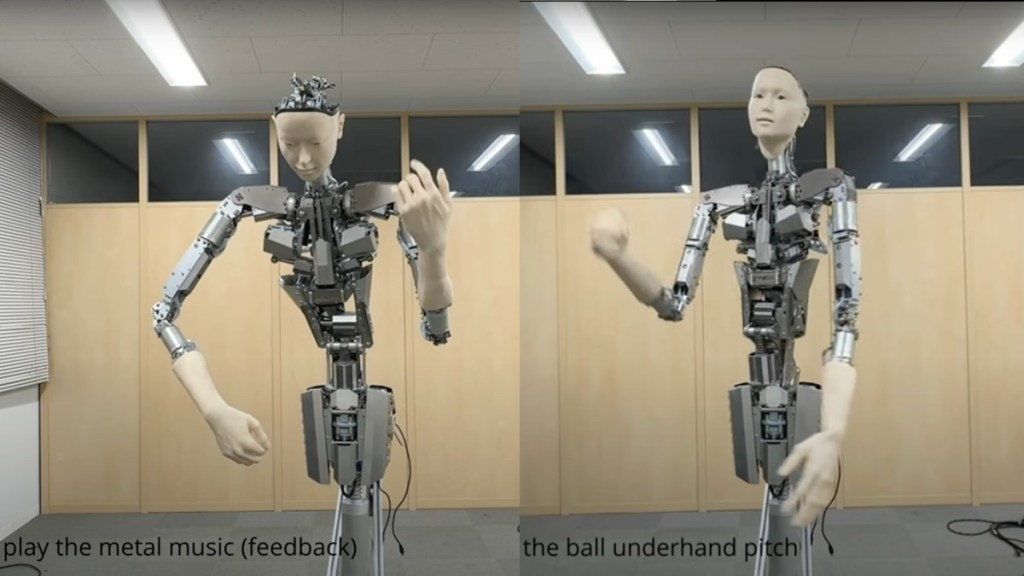

Researchers at the University of Tokyo and Alternative Machine have developed Alter3, a humanoid robot system that maps natural language commands directly to robot actions using a large language model, GPT-4. By combining foundation models with robotics, the system lets Alter3 perform complex tasks such as taking a selfie or pretending to be a ghost. GPT-4 serves as the backend model: it receives a natural language instruction and plans the series of actions the robot must take to achieve its goal. The model then generates the commands the robot needs to perform each step, using its in-context learning ability to adapt to the robot’s API. The system also accepts human feedback and corrections, which refine the action sequence, and the refined sequence is stored for future use. The work demonstrates the potential of off-the-shelf foundation models as reasoning and planning modules in robot control systems. It also highlights the need to address the basic challenges of building robots that can perform primitive tasks, such as grasping objects and maintaining balance.
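The flow described above (instruction, plan, per-step commands, optional human feedback, stored sequence) can be sketched roughly as follows. This is only an illustration under stated assumptions, not the researchers' implementation: `MotionPipeline`, `fake_llm`, the `PLAN:`/`COMMAND:` prompt prefixes, and the `set_pose(...)` command string are all hypothetical names, and the language model is replaced by a stub so the example runs offline.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# Hypothetical stand-in for a language model call: prompt text in, reply text out.
LLM = Callable[[str], str]


@dataclass
class MotionPipeline:
    llm: LLM
    # Stored action sequences, keyed by the original instruction.
    memory: Dict[str, List[str]] = field(default_factory=dict)

    def plan(self, instruction: str) -> List[str]:
        """Ask the model for a plan and split it into one step per line."""
        reply = self.llm(f"PLAN: {instruction}")
        return [line.strip() for line in reply.splitlines() if line.strip()]

    def to_commands(self, steps: List[str]) -> List[str]:
        """Ask the model to turn each planned step into a robot command."""
        return [self.llm(f"COMMAND: {step}").strip() for step in steps]

    def run(self, instruction: str, feedback: Optional[str] = None) -> List[str]:
        # Reuse a stored sequence unless the human supplies a correction.
        if feedback is None and instruction in self.memory:
            return self.memory[instruction]
        prompt = instruction if feedback is None else f"{instruction} (feedback: {feedback})"
        commands = self.to_commands(self.plan(prompt))
        self.memory[instruction] = commands  # store for future use
        return commands


def fake_llm(prompt: str) -> str:
    """Offline stub that imitates the two kinds of model reply (assumption)."""
    if prompt.startswith("PLAN:"):
        return "raise right arm\nturn head to camera\nsmile"
    step = prompt[len("COMMAND: "):]
    return f"set_pose('{step}')"  # hypothetical robot API call


if __name__ == "__main__":
    pipeline = MotionPipeline(llm=fake_llm)
    for command in pipeline.run("take a selfie"):
        print(command)
```

In a real system the stub would be replaced by a call to the chosen model, and the prompt for the command stage would include examples of the robot's actual API so the model can adapt to it in context.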

Robotics Revolution

Alter3 uses GPT-4 as the backend model to plan a series of actions that the robot must take to achieve its goal.
