OpenAI has introduced CriticGPT, a model designed to catch errors in code generated by ChatGPT. Since its release, ChatGPT has been praised for its ability to generate code in languages like Python and Ruby, but it has also been criticized for producing error-laden code. A Purdue University study found that ChatGPT’s answers to programming questions taken from Stack Overflow were wrong 52% of the time, and that the errors were often hard to detect because the responses were so articulate. CriticGPT, built on OpenAI’s GPT-4 model, aims to identify these mistakes and point them out in written critiques. Initially, CriticGPT will assist the AI trainers who review ChatGPT’s output as part of reinforcement learning from human feedback (RLHF), helping them catch errors they might otherwise miss. OpenAI reports that reviewers assisted by CriticGPT outperform those working without it 60% of the time. However, CriticGPT currently handles only short answers and is not immune to hallucinations. Despite these limitations, CriticGPT is a positive step toward more reliable AI-generated code.

OpenAI Unveils CriticGPT to Improve ChatGPT’s Coding Accuracy

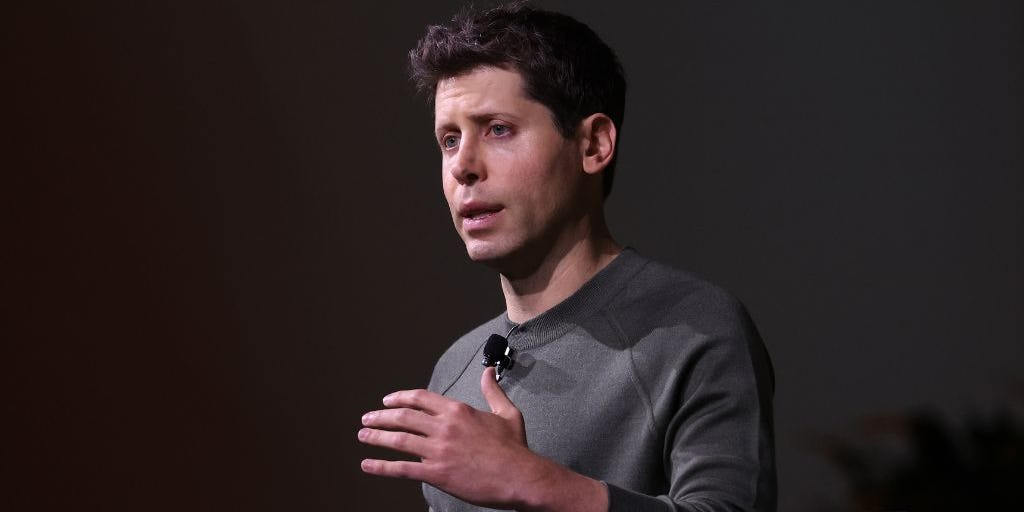

OpenAI aims to tackle coding errors in ChatGPT with the new CriticGPT model.
