The development of autonomous weapons systems marks a critical juncture in military technology, raising profound ethical and security concerns. These weapons, capable of selecting and engaging targets without human intervention, are no longer confined to science fiction.

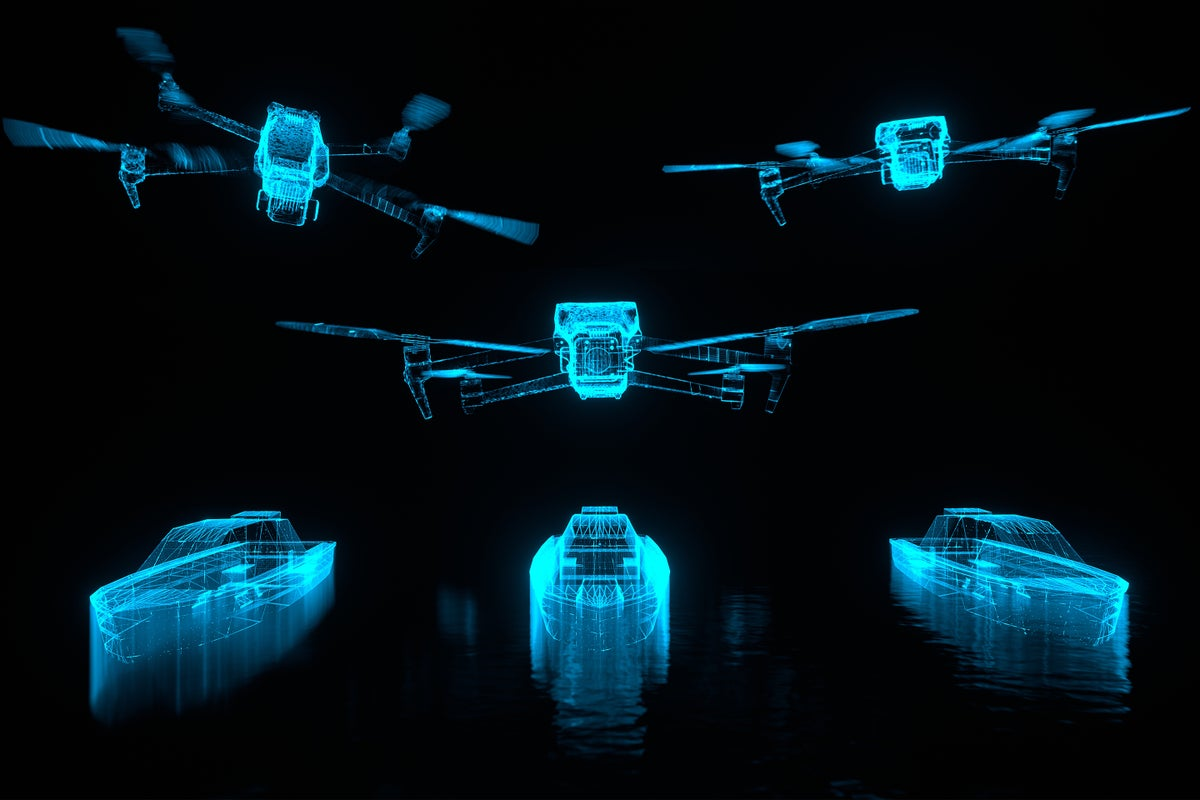

Autonomous weapons systems are evolving rapidly, and some are already in use. These range from unmanned drones to AI-powered databases for target identification. The international community is grappling with the ethical implications and potential consequences of allowing machines to make life-and-death decisions in warfare.

Main points:

- International regulations are urgently needed to ensure human control over autonomous weapons

- Existing autonomous weapons include drones, loitering munitions, and AI-powered targeting systems

- Major powers like the U.S. are investing heavily in military AI projects, despite recognizing the associated risks

- Organizations such as Human Rights Watch and the UN are working to establish prohibitions and restrictions on autonomous weapons

The proliferation of autonomous weapons systems could lead to a new arms race, fundamentally changing the nature of warfare. Without proper regulation, these weapons pose significant risks to civilian populations and could escalate conflicts beyond human control. The current moment is crucial for establishing international norms and regulations to govern the development and use of autonomous weapons, so that human judgment remains central in matters of life and death.