The Rise of AI-Driven Fraud

Warren Buffett’s warning that AI-enabled scams could become a major “growth industry” highlights how deeply artificial intelligence is changing cybercrime. Gone are the days of easily identifiable phishing attempts with obvious errors. Today’s AI-powered scams are sophisticated, hyper-personalized, and often hard to distinguish from legitimate communications.

Key Developments in AI Scams

- Generative AI enables criminals to automatically analyze individual profiles and create highly targeted scams.

- Dark web services like FraudGPT and WormGPT make it easier for criminals to produce polished, carefully crafted attacks.

- AI-generated scams incorporate specific details about victims’ work, relationships, and personal lives.

- Traditional defenses, such as watching for obvious errors, are losing effectiveness because these scams are so specific and relevant to each victim.

Combating the Threat

Addressing this evolving threat requires people who can recognize AI-driven scams and respond to them. Constella’s ScamGPT solution aims to educate users by generating AI scams similar to those created by fraudsters, so that users learn to detect real scam attacks in the future.

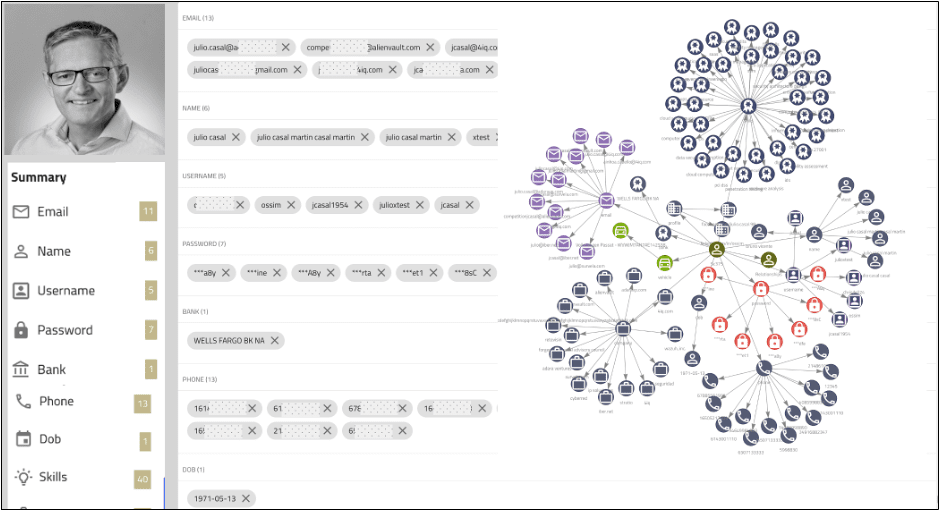

The ScamGPT system draws on a vast data lake and a proprietary AI profiling engine to gather detailed information about potential victims. It then uses this information to generate highly convincing scams, demonstrating the level of sophistication that cybercriminals can achieve with AI tools.

As AI continues to evolve, its use in both legitimate and criminal activities will expand. The rise of AI-driven scams underscores the importance of building strong user and employee defenses through awareness and training. By understanding what AI makes possible in cybercrime, and the risks that follow, organizations and individuals can better prepare for a rapidly changing threat landscape.