Understanding the Breakthrough

Generative large language models (LLMs) now power a wide range of applications, but their energy consumption and carbon footprint are significant concerns. LLM inference clusters are complex, which makes it hard to manage energy use while still meeting strict performance targets. Researchers from the University of Illinois and Microsoft have developed a framework called DynamoLLM, which improves the energy efficiency of LLM inference by dynamically adjusting compute resources as the workload changes.

Key Features of DynamoLLM

- DynamoLLM can save up to 53% of the energy typically used by LLM inference clusters.

- It reduces operational carbon emissions by 38% while maintaining performance standards.

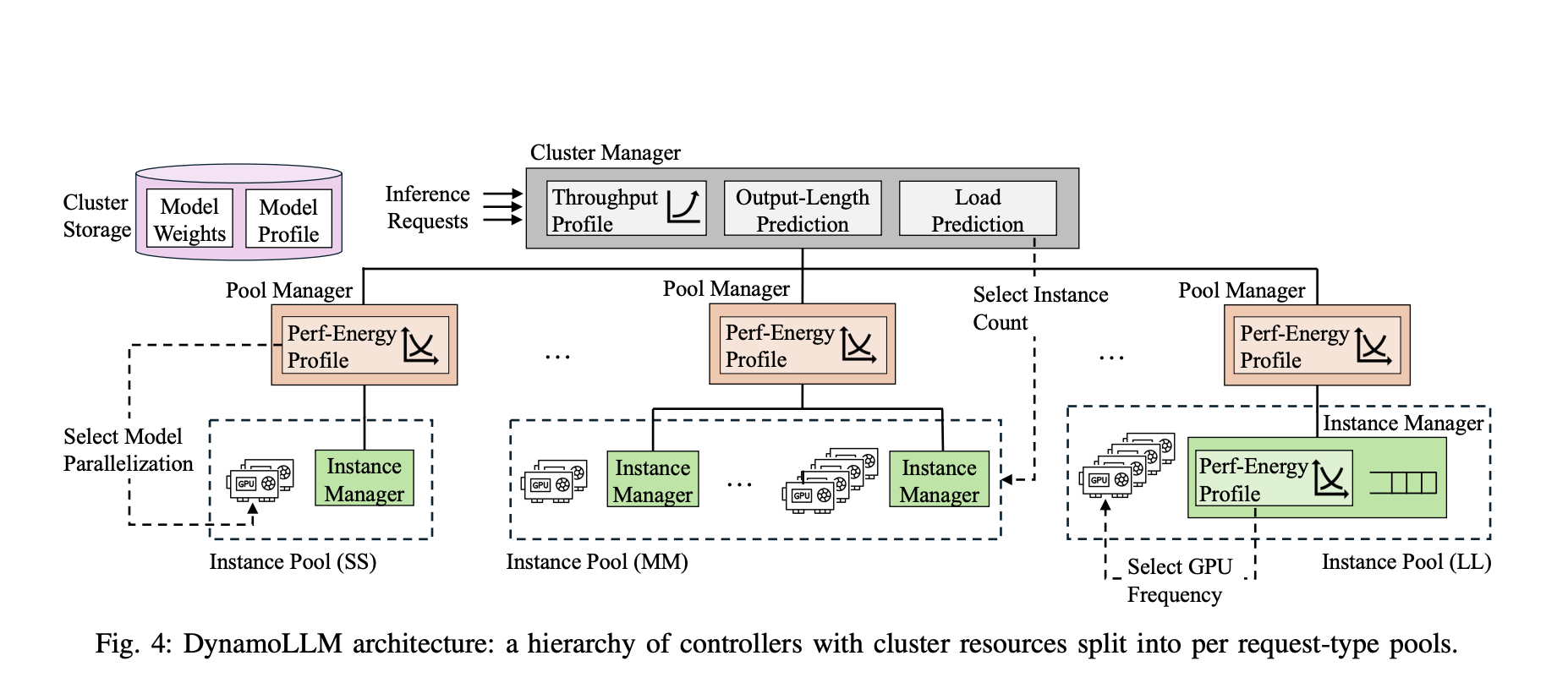

- The framework adjusts configurations in real time, tuning the number of model instances, the degree of model parallelism, and the GPU operating frequency.

- Customer costs can be cut by 61%, making LLM services more affordable without sacrificing service quality.
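
To make the real-time adjustment in the list above more concrete, here is a minimal Python sketch of the general idea: among the configurations that can handle the current load within a latency target, pick the one that draws the least power. This is an illustration under stated assumptions, not DynamoLLM's actual algorithm. The `Config` class, the `pick_config` function, and every number in `PROFILE` are hypothetical and invented for this example.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Config:
    """One hypothetical cluster configuration with made-up profile data."""
    instances: int          # number of model instances
    tensor_parallel: int    # GPUs per instance (degree of model parallelism)
    gpu_freq_mhz: int       # GPU operating frequency
    max_rps: float          # requests per second the configuration can sustain
    p99_latency_s: float    # tail latency at that load, in seconds
    power_w: float          # average power draw, in watts


# Illustrative profile table. All values are invented for the example;
# a real system would measure them on its own hardware and models.
PROFILE = (
    Config(1, 2, 1200, 4.0, 0.9, 700.0),
    Config(1, 4, 1200, 8.0, 0.6, 1300.0),
    Config(2, 4, 1200, 16.0, 0.6, 2600.0),
    Config(2, 4, 1980, 24.0, 0.4, 4200.0),
    Config(1, 8, 1980, 14.0, 0.3, 3000.0),
)


def pick_config(
    load_rps: float,
    latency_slo_s: float,
    profile: Sequence[Config] = PROFILE,
) -> Optional[Config]:
    """Return the lowest-power configuration that serves the load within the
    latency target, or None if no profiled configuration qualifies."""
    feasible = [
        c for c in profile
        if c.max_rps >= load_rps and c.p99_latency_s <= latency_slo_s
    ]
    if not feasible:
        return None
    return min(feasible, key=lambda c: c.power_w)


if __name__ == "__main__":
    # Re-evaluate the choice as the observed load changes.
    for load in (3.0, 10.0, 20.0):
        print(load, pick_config(load, latency_slo_s=1.0))
```

In this sketch a light load selects a small, low-frequency configuration, while a heavier load or a tighter latency target pushes the choice toward more instances, more parallelism, or a higher GPU frequency. A real controller would also need to account for the cost of switching between configurations, which this example ignores.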

Significance of the Innovation

DynamoLLM is a step toward more sustainable AI. By cutting both cost and energy use, the framework makes LLM inference more efficient and reduces its carbon footprint. As AI adoption grows, innovations of this kind help ensure that advances benefit both users and the planet.