Understanding AI Bias and Reasoning Models

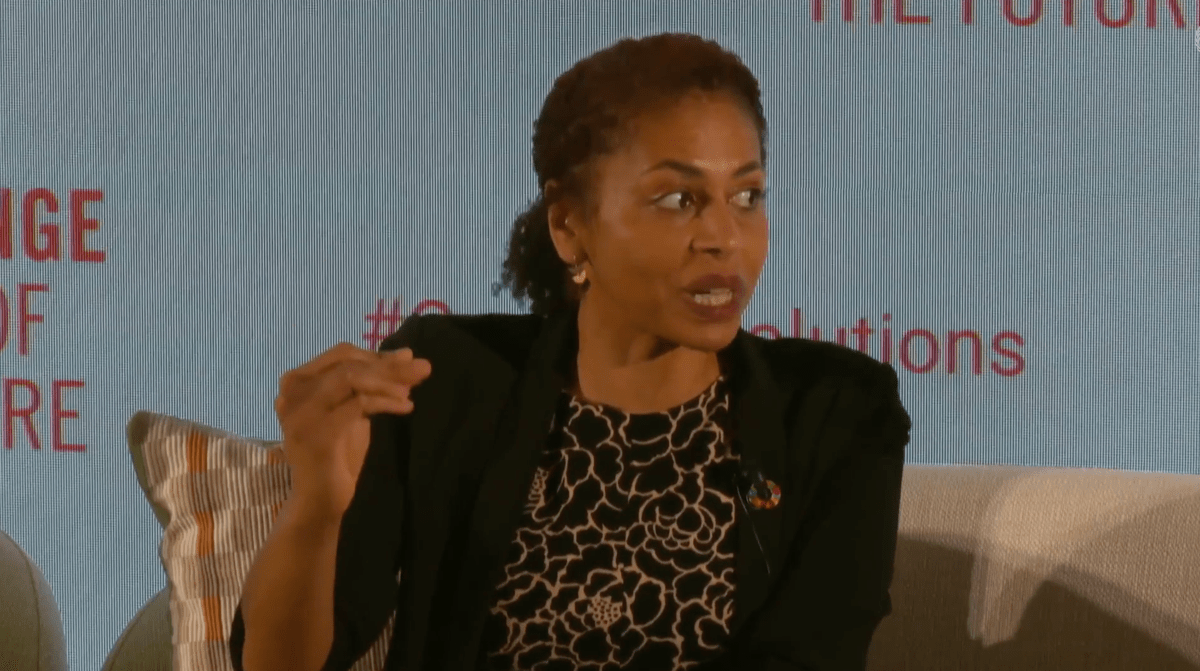

Anna Makanju, OpenAI’s VP of global affairs, recently discussed the potential of reasoning models like o1 at the UN’s Summit of the Future. She argued that these models could significantly reduce bias in AI because they assess their own responses: o1 can analyze its reasoning and identify flaws, which may lead to more accurate and less biased answers.

Key Insights on o1’s Performance

- OpenAI’s testing shows that o1 generally produces fewer toxic or biased responses than non-reasoning models.

- However, o1 did not outperform GPT-4o on every bias test. In some cases, it showed more explicit discrimination based on age and race.

- A smaller version, o1-mini, performed worse than GPT-4o, showing higher rates of explicit discrimination.

- Reasoning models are slower and more expensive to run than existing models, which could limit who can access them.

The Bigger Picture: Future of AI and Bias

Reasoning models like o1 show promise for minimizing bias, but the results so far are mixed, and the models need significant improvements in speed and cost. To become mainstream, they must demonstrate clear advantages beyond bias reduction. Otherwise, only those with substantial resources will be able to use them, which raises important questions about equitable access to AI technology and about the ongoing struggle against bias in artificial intelligence.