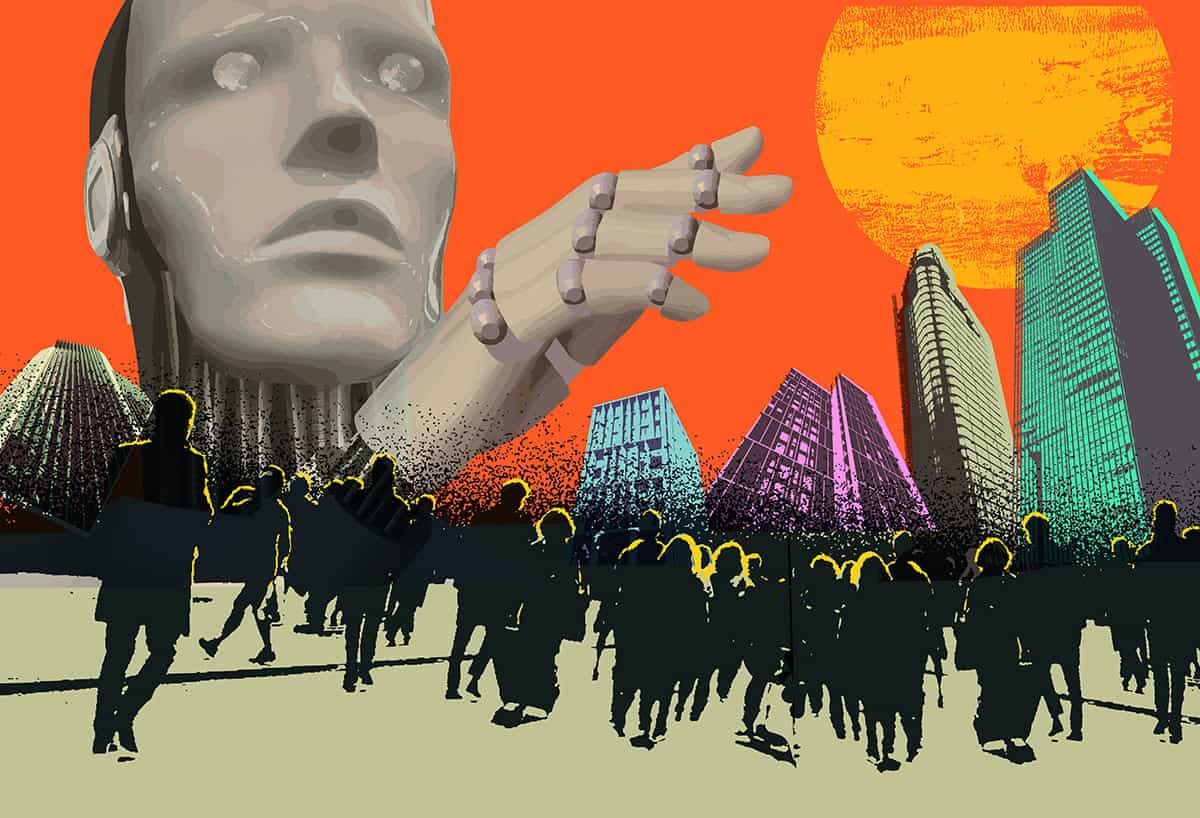

Understanding the Impact of Generative AI

Generative AI is changing how science is communicated, but it also raises concerns about accuracy and misinformation. The 2024 Nobel Prize in Physics recognized foundational work on the artificial neural networks that underpin the technology. However, incidents like Cosmos magazine’s AI-generated articles show the potential pitfalls of relying on AI for scientific writing. Critics argue that AI can produce inaccurate content and undermine traditional journalism, leading to a decline in quality.

Key Insights

- Generative AI can create new content from minimal input, but it may also generate inaccuracies, known as “hallucinations.”

- Tools like ChatGPT have become immensely popular, but they can misinform users with incorrect output.

- AI can enhance accessibility in education by offering interactive learning experiences tailored to individual needs.

- Using AI in science communication requires careful fact-checking and transparency to maintain credibility.

The Bigger Picture

The integration of generative AI into science communication is both promising and challenging. While it has the potential to make scientific knowledge more accessible and engaging, it also risks perpetuating misinformation. As science communicators navigate this new landscape, they must balance the benefits of AI with the need for accuracy and integrity. The future of science journalism depends on how effectively these tools are incorporated while ensuring that the public remains informed and engaged.