Understanding the Shift in AI Development

The tech industry is changing how it views progress in artificial intelligence (AI). The once-dominant scaling law, which held that larger AI models trained on more data would yield smarter systems, is losing its grip. The idea drove rapid advances in AI, especially after the launch of ChatGPT. However, recent models from major companies such as OpenAI and Google are not delivering the anticipated improvements, leading experts to rethink their approach.

Key Insights on Current Trends

- The scaling law is no longer yielding the expected results, especially in the pre-training phase of AI models.

- Industry leaders acknowledge that further development is needed beyond initial training to maintain progress.

- New definitions of scaling are emerging, focusing on reasoning capabilities rather than just size.

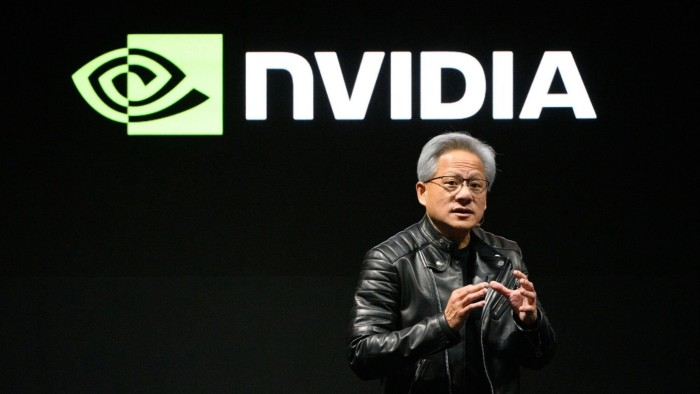

- Nvidia, heavily invested in chip production for AI, faces potential challenges if the scaling law falters.

The Bigger Picture: Implications for the Future

This shift in AI development raises concerns for Nvidia, whose growth has depended on the scaling law holding. As the industry grapples with the need for practical AI applications, Nvidia’s future hinges on Big Tech achieving meaningful outcomes from its investments. If the “bigger is better” trend in AI fades, the tech sector may reevaluate its spending and strategies, which would affect Nvidia’s market position and growth.