Overview of Recent Developments

Character AI is facing significant legal challenges, including lawsuits over a teen’s suicide and over the exposure of minors to inappropriate content. In response, the company has rolled out new safety features aimed at protecting younger users. These include a separate AI model for users under 18, designed to make interactions safer by limiting discussion of sensitive topics. The platform, which has more than 20 million monthly users, lets people create and chat with a wide range of AI characters.

Key Features of the New Safety Tools

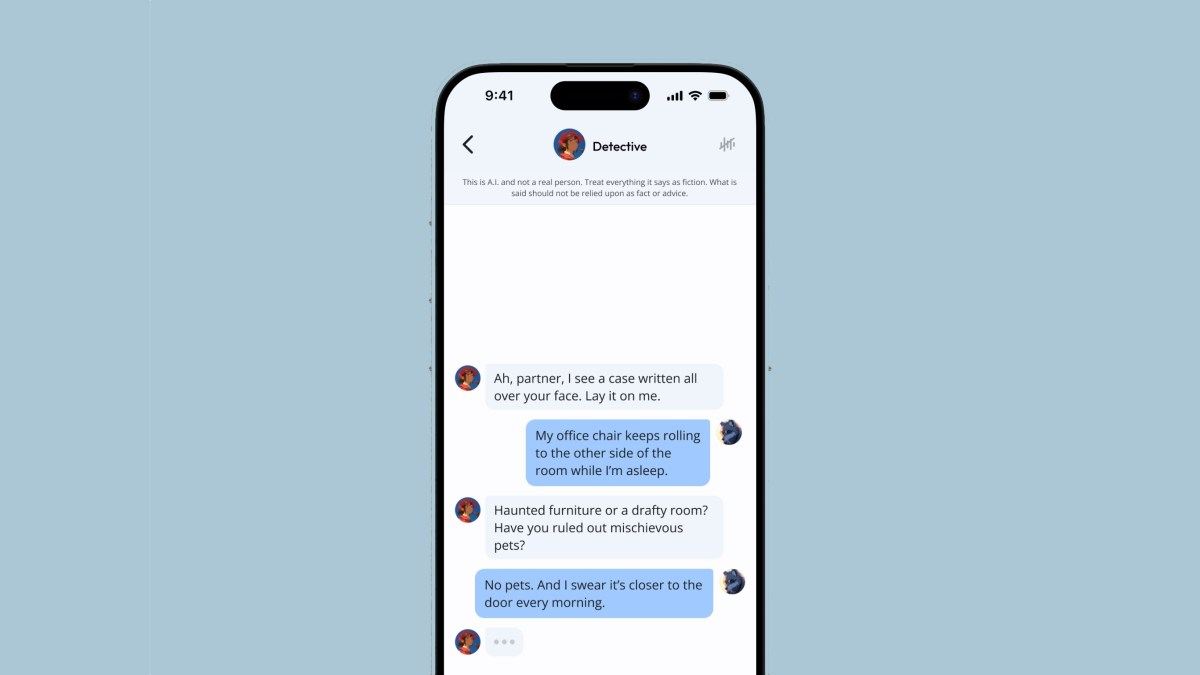

- A dedicated model for teens that reduces exposure to inappropriate content, particularly content involving violence and romance.

- Input and output classifiers that filter harmful language and block discussion of sensitive topics.

- A notification system that alerts users after 60 minutes of use, encouraging healthier screen-time habits.

- Enhanced disclaimers clarifying that AI characters are not real people and should not be relied on for professional advice.

Importance of These Changes

These safety measures reflect growing concern about how AI interactions affect young users. With the average user spending nearly 100 minutes a day on the app, strong protections are clearly needed. By positioning itself as an entertainment platform rather than a mental health resource, Character AI aims to create a safer environment while still allowing creative expression. The shift matters both for user safety and for the company’s reputation as it faces ongoing scrutiny and litigation.