Understanding the Energy Landscape

As AI systems become more prevalent, the future of technology is increasingly tied to energy management. The more we rely on AI, the more important it is to understand how much energy it consumes. A recent talk by Vijay Gadepally highlighted the importance of conserving energy when using AI and data centers. The discussion draws attention to how we power our digital tools and to the environmental impact of doing so.

Key Principles for Energy Conservation

- Assess Energy Impact: Gain insight into how much power AI operations consume.

- On-Demand Power: Provide energy only when necessary to avoid waste.

- Optimize Computing Budgets: Focus on essential tasks to minimize energy use.

- Utilize Smaller Models: Implement smaller, efficient models for less demanding tasks.

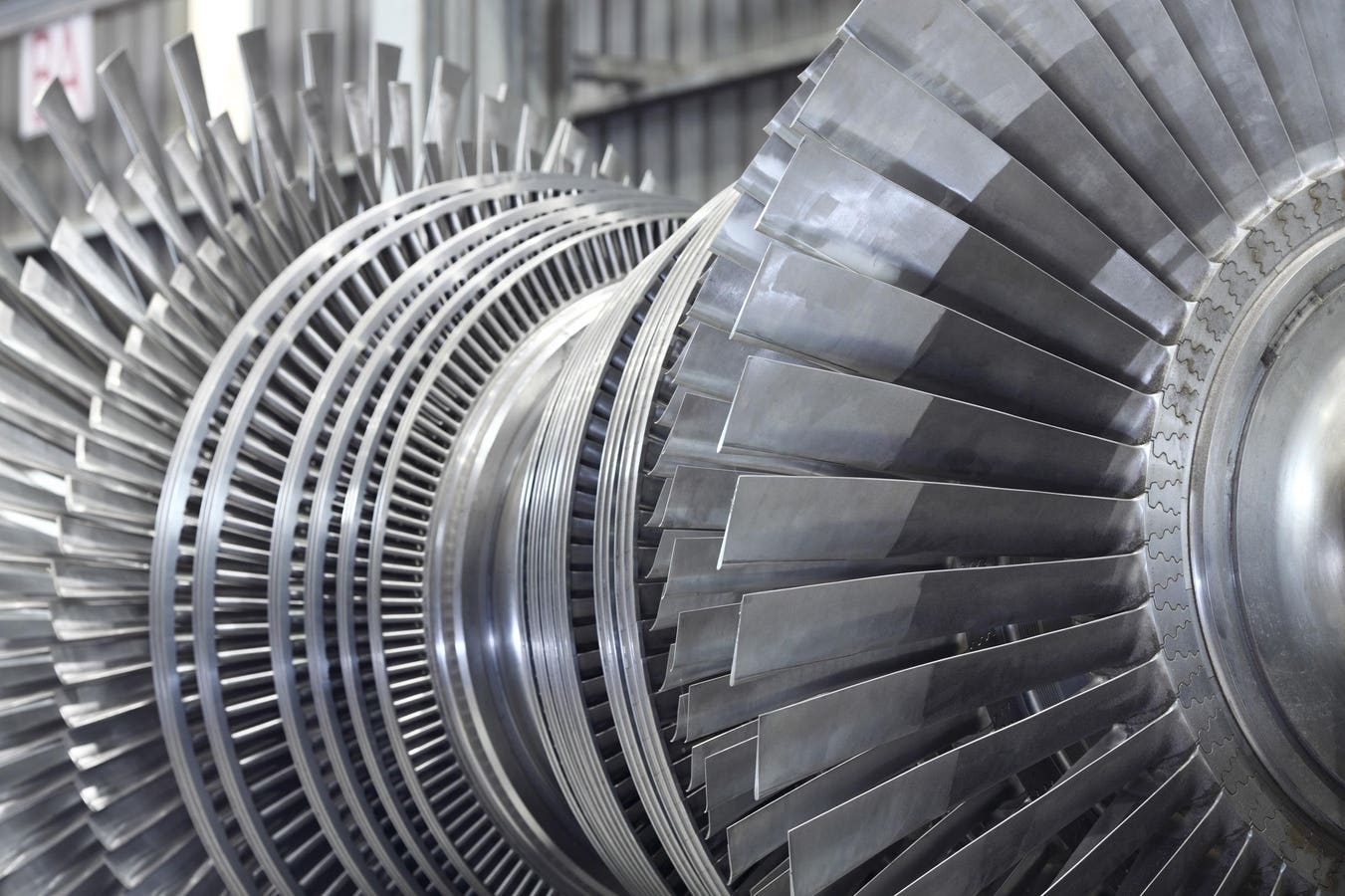

- Sustainable Systems: Design energy systems that reduce loss during transmission and rely on renewable sources.
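
To make the first and fourth principles concrete, here is a minimal back-of-the-envelope sketch of how energy use could be estimated and compared. It is not a method from the talk: the function name `energy_kwh`, the power figures, and the facility overhead factor (PUE, power usage effectiveness) are all illustrative assumptions.

```python
def energy_kwh(avg_power_watts: float, hours: float, pue: float = 1.0) -> float:
    """Estimate energy in kWh as power (W) x time (h) / 1000, scaled by PUE.

    PUE (power usage effectiveness) accounts for facility overhead such as
    cooling; 1.0 means no overhead. All inputs here are assumed values.
    """
    if avg_power_watts < 0 or hours < 0 or pue < 1.0:
        raise ValueError("power and hours must be >= 0, and pue must be >= 1.0")
    return avg_power_watts * hours / 1000.0 * pue


# Hypothetical comparison: a larger model vs. a smaller one on the same job.
large_model = energy_kwh(avg_power_watts=300.0, hours=10.0, pue=1.5)
small_model = energy_kwh(avg_power_watts=100.0, hours=10.0, pue=1.5)
savings = large_model - small_model

print(f"large: {large_model} kWh, small: {small_model} kWh, saved: {savings} kWh")
```

With real measurements in place of these made-up numbers, the same arithmetic gives a rough sense of whether a smaller model is worth choosing for a less demanding task.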

The Bigger Picture

Addressing the energy needs of AI is not just a matter of efficiency; it is also a matter of sustainability and environmental responsibility. As AI applications expand, their energy consumption is likely to keep growing, which makes it important to adopt strategies that limit their ecological footprint. Doing so benefits the planet and can also help organizations cut costs and run more efficiently. These energy-saving principles will matter more and more as the world becomes increasingly AI-driven.