Exploring AI’s Inner Workings

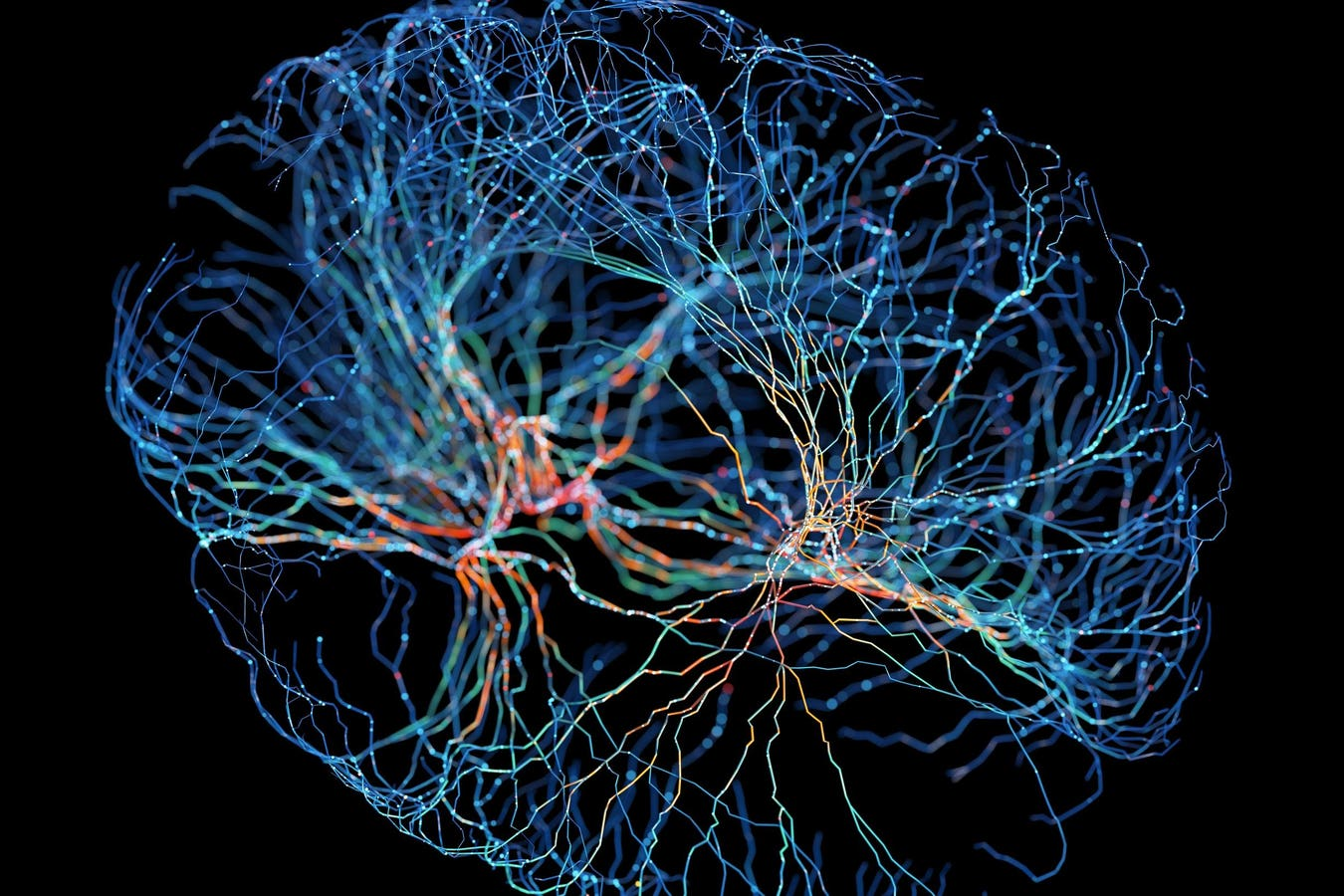

The rapid rise of artificial intelligence (AI) technology has created a knowledge gap among the general public about how these systems operate. Many people express confusion or indifference about how large language models (LLMs) and neural networks work. This lack of understanding is concerning, given AI’s significant impact on society and on people’s personal lives. Insights from renowned computer scientist Yann LeCun shed light on the complexities of AI development. He emphasizes that intelligence is not a single quantity that grows along one line, but emerges from a network of interconnected systems working together.

Key Takeaways

- AI is not a single powerful entity but a collection of smaller, interconnected systems, similar to Marvin Minsky’s “society of mind.”

- True intelligence involves a range of skills and the ability to learn new ones quickly, rather than simply accumulating more power.

- Persistent memory is crucial for AI systems. That memory can be divided into parametric (long-term) memory, stored in the model’s parameters, and working (short-term) memory.

- AI needs a new architectural framework to develop common sense and contextual understanding, going beyond what current LLMs can do.

The Bigger Picture

Understanding how AI is developed is essential as we navigate its growing influence. The choice between open-source and proprietary models will shape the future of AI innovation. LeCun’s insights encourage a shift in perspective, prompting us to rethink how we approach AI and what it can become. As AI continues to evolve, grasping its fundamentals will be vital for harnessing its benefits and addressing its challenges.