Understanding the Innovation

Google DeepMind has integrated its multimodal language model, Gemini 2.0, into robotic systems. The model gives robots an AI "brain" that lets them comprehend and interact with the physical world in a more human-like manner. Because the model is generative, these robots can perform tasks they were not specifically trained for, simply by following everyday instructions given in natural language.

Key Features of Gemini 2.0

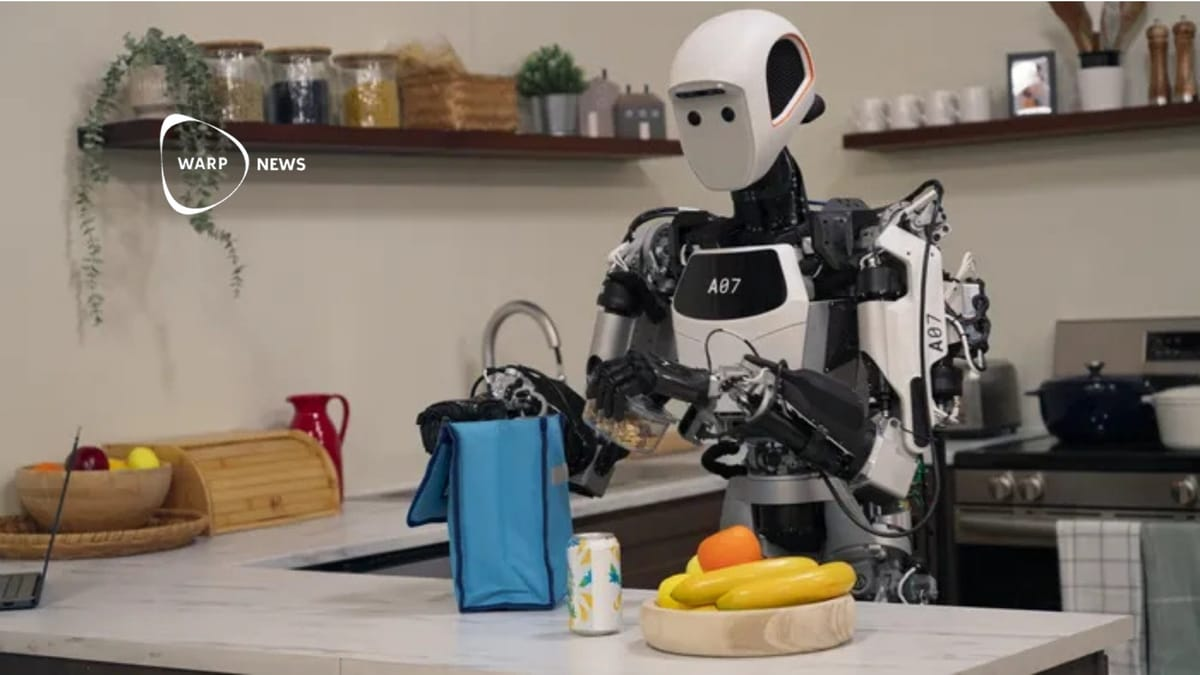

- Robots can now complete tasks such as packing snacks or folding origami by interpreting instructions.

- They can quickly adapt when something unexpected happens, such as an object being dropped.

- The model supports multilingual commands, making interaction more intuitive.

- Robots can safely grip objects, like coffee mugs, by understanding their structure and function.

The Bigger Picture

This development matters for the future of robotics. By improving generality, interactivity, and dexterity, Gemini 2.0 lets robots handle tasks and situations they have not encountered before. Because the model can adapt to different robot platforms, the same technology can be applied across a range of robotic systems, which widens its use in real-world applications. As generative AI continues to evolve, it could transform industries by making robots more versatile and capable of complex tasks that require a deeper understanding of their environment.