Understanding the Shift to Smaller Models

Large language models (LLMs) owe much of their capability to their vast number of parameters, which improve their ability to identify patterns. However, training these models requires significant computational resources, making them costly and energy-intensive. In response, tech giants like IBM, Google, Microsoft, and OpenAI are developing small language models (SLMs) that use only a few billion parameters. These smaller models are not designed for general use but excel at specific tasks, such as chatbots and data collection, and can run on everyday devices like laptops and smartphones.
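A rough calculation helps show why parameter count decides whether a model fits on a laptop or phone. The sketch below estimates the memory needed to hold a model's weights alone. The parameter counts (3 billion and 300 billion) and the storage widths (16 and 4 bits per parameter) are illustrative assumptions, not figures from any specific model, and the function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_parameters, bits_per_parameter):
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes).

    Ignores activations, caches, and runtime overhead, so real usage is higher.
    """
    return num_parameters * bits_per_parameter / 8 / 1e9


# Illustrative sizes only: "a few billion" parameters versus a much larger model.
for label, params in [("3B-parameter model", 3e9), ("300B-parameter model", 300e9)]:
    for bits in (16, 4):
        size = weight_memory_gb(params, bits)
        print(f"{label} at {bits} bits per parameter: {size:.1f} GB")
```

Under these assumptions, the smaller model's weights need about 6 GB at 16 bits per parameter, which is within reach of consumer hardware, while the larger model needs roughly 600 GB.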

Key Details About Small Language Models

- SLMs typically max out at around 10 billion parameters, significantly fewer than LLMs.

- They can be trained using high-quality data generated by larger models through a process called knowledge distillation.

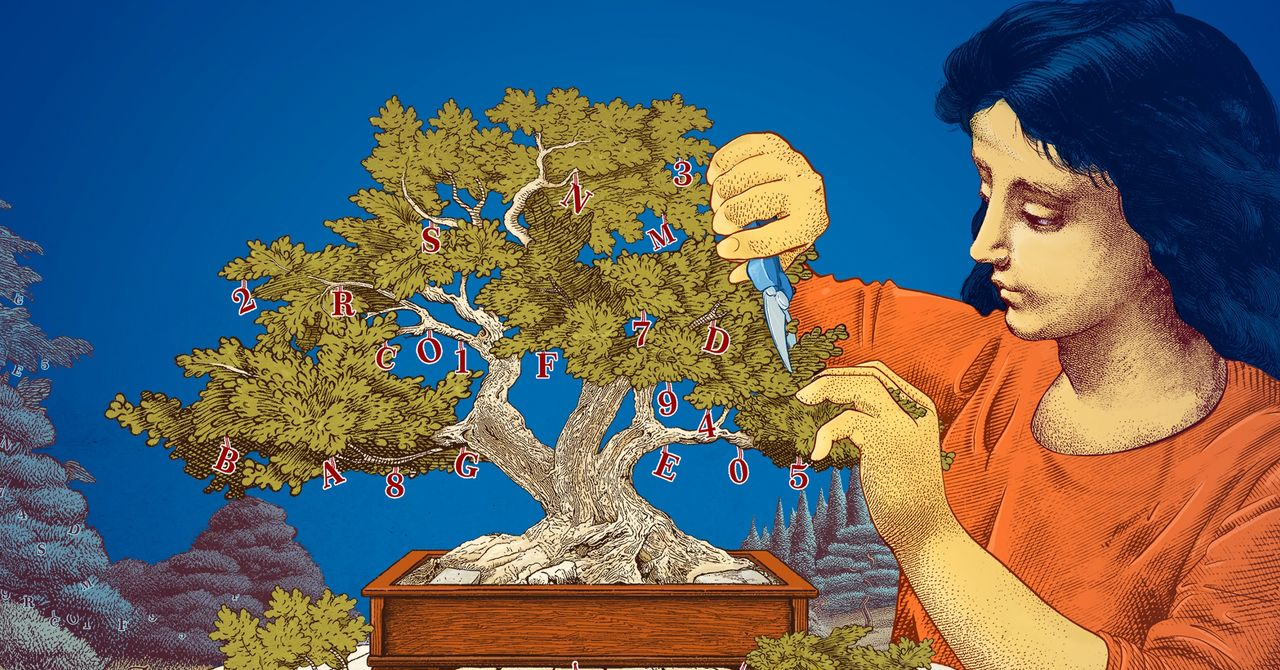

- Pruning techniques allow researchers to remove unnecessary parts of neural networks, improving efficiency while ideally sacrificing little or no performance.

- Smaller models provide a cost-effective platform for researchers to experiment with new ideas and improve transparency in reasoning.
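The two techniques named in the list above, knowledge distillation and pruning, can be illustrated with a minimal sketch. It makes two assumptions that are not in the text. First, distillation is shown in its soft-label form, where the small model is penalized for diverging from the large model's output probabilities; the text describes it only as training on data generated by a larger model. Second, pruning is shown as magnitude pruning, a simple criterion that zeroes the weights with the smallest absolute value. The function names, the `temperature` parameter, and the toy numbers are all hypothetical.

```python
import math


def log_softmax(logits, temperature=1.0):
    """Log-probabilities from raw scores; a higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    log_total = peak + math.log(sum(math.exp(x - peak) for x in scaled))
    return [x - log_total for x in scaled]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    The value is 0 when the student matches the teacher and grows as they diverge.
    """
    log_p = log_softmax(teacher_logits, temperature)
    log_q = log_softmax(student_logits, temperature)
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))


def magnitude_prune(weights, fraction):
    """Zero out the given fraction of weights with the smallest absolute value."""
    n_drop = int(len(weights) * fraction)
    # Indices ordered by magnitude, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_drop])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


# Toy example: a student that disagrees with its teacher has a positive loss.
teacher = [2.0, 1.0, 0.0]
student = [0.0, 1.0, 2.0]
print(distillation_loss(teacher, student))

# Toy example: drop the half of the weights closest to zero.
print(magnitude_prune([0.5, -0.01, 0.2, -0.9], fraction=0.5))  # [0.5, 0.0, 0.0, -0.9]
```

In practice both steps run inside a training framework over millions of examples and weights; the toy lists here only show the shape of each computation.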

Why This Matters

The development of small language models signals a shift toward efficiency and accessibility in AI. While large models will continue to serve complex applications, SLMs offer a practical solution for many everyday tasks. Their lower resource requirements can save significant time and cost, putting advanced AI tools within reach of a broader audience and allowing more researchers and developers to innovate without the burden of high computational demands.