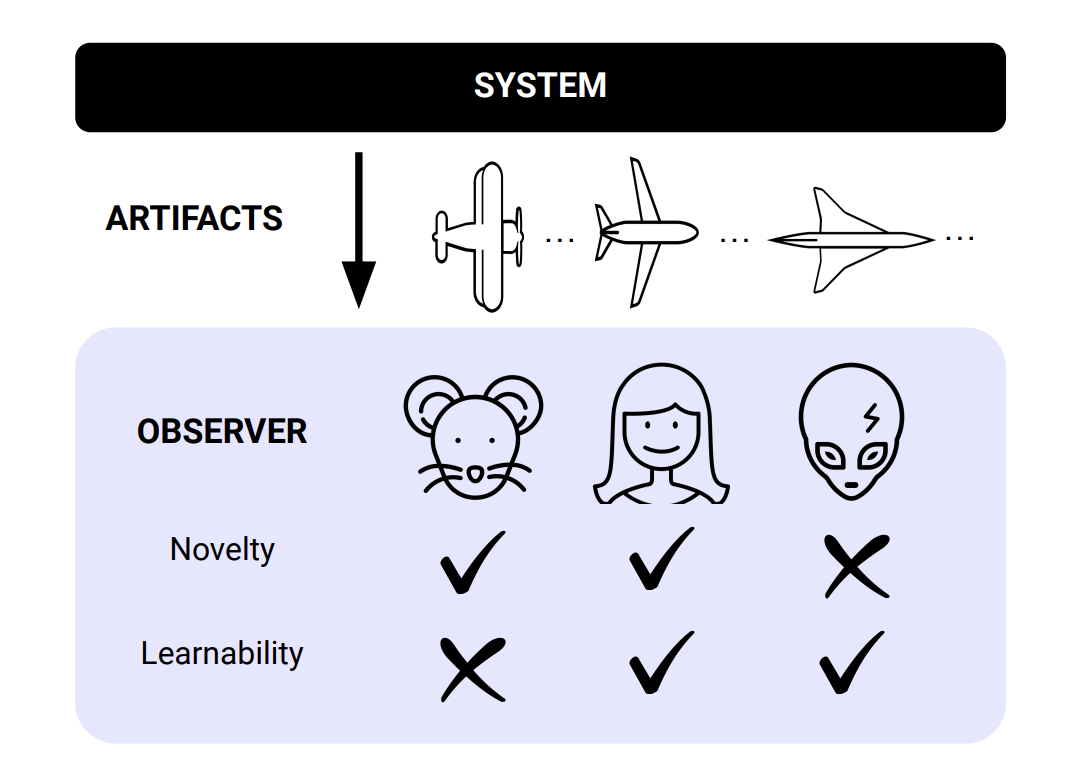

The pursuit of artificial general intelligence (AGI) has made significant strides in recent years, but a critical component remains elusive: the ability of autonomous systems to improve themselves, producing increasingly creative and diverse discoveries. Researchers at DeepMind propose a concrete, formal definition of open-endedness in AI systems, which could be the key to unlocking AGI. They argue that open-endedness, enabled by combining foundation models with open-ended algorithms, is essential for any artificial superhuman intelligence (ASI) system to continuously expand its capabilities and knowledge.

Under the researchers' definition, a system is open-ended with respect to an observer if it produces a sequence of artifacts that are both novel and learnable. Novelty means that, for the observer's model at any fixed point in time, artifacts further in the future become increasingly unpredictable. Learnability means that conditioning on a longer history of past artifacts makes future ones more predictable. This formal definition quantifies the key intuition that an open-ended system endlessly generates artifacts that are both novel and meaningful to the observer.
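To make the two conditions concrete, here is a minimal sketch, assuming the observer can be summarized by a table `loss[t][T]`: the observer's expected loss when predicting artifact `T` using the model it built after seeing artifacts up to time `t`. This tabular setup, the function names `is_novel`, `is_learnable`, and `is_open_ended`, and the toy loss tables are all hypothetical illustrations. They are not taken from the paper. The inequalities follow our reading of its conditions: novelty requires the loss to grow as the target artifact moves further into the future, and learnability requires the loss on a given artifact to shrink as the observer sees more history.

```python
from typing import List

# Hypothetical representation: loss[t][T] is the observer's expected loss on
# artifact T, using the model built after seeing artifacts up to time t.
# Only entries with t < T are consulted.
LossMatrix = List[List[float]]


def is_novel(loss: LossMatrix) -> bool:
    """Novelty: for every fixed observer time t, later artifacts are harder
    to predict, i.e. loss[t][T] > loss[t][T_prev] whenever T > T_prev > t."""
    n = len(loss)
    return all(
        loss[t][T] > loss[t][T_prev]
        for t in range(n)
        for T_prev in range(t + 1, n)
        for T in range(T_prev + 1, n)
    )


def is_learnable(loss: LossMatrix) -> bool:
    """Learnability: for every fixed artifact T, an observer that has seen more
    history predicts it better, i.e. loss[t_later][T] < loss[t][T] whenever
    t < t_later < T."""
    n = len(loss)
    return all(
        loss[t_later][T] < loss[t][T]
        for T in range(n)
        for t in range(T)
        for t_later in range(t + 1, T)
    )


def is_open_ended(loss: LossMatrix) -> bool:
    """Open-ended (under this sketch) means both novel and learnable."""
    return is_novel(loss) and is_learnable(loss)


n = 6
# Toy stream: loss grows with the gap between observer time and artifact time,
# so later artifacts are surprising, yet more history always helps.
growing_gap = [[float(T - t) for T in range(n)] for t in range(n)]
# Toy stream: loss depends only on the artifact, so more history never helps.
history_blind = [[float(T) for T in range(n)] for t in range(n)]

print(is_novel(growing_gap), is_learnable(growing_gap))      # True True
print(is_novel(history_blind), is_learnable(history_blind))  # True False
```

The second toy stream shows why novelty alone is not enough: its artifacts keep getting harder to predict, but because extra history never reduces the loss, the observer cannot learn anything from them, so the sketch does not count it as open-ended.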

In my opinion, this research marks a significant step towards achieving true AGI. By combining foundation models with open-ended algorithms, we may finally be able to create AI systems that continuously learn and improve themselves, potentially leading to exponential growth in knowledge and capabilities. However, as the researchers caution, this immense power also carries significant safety risks, which must be addressed hand in hand with the development of open-ended systems.