The article highlights the limitations of large language models (LLMs) in providing precise and accurate answers to questions. The author argues that LLMs are probabilistic systems that cannot guarantee accurate answers, unlike databases, which return precise factual information. LLMs can generate responses that “look like” good answers, but those responses are not always correct. The author suggests that this limitation is not a reason to dismiss LLMs but a reason to recognize their strengths and weaknesses. The article proposes two ways of addressing the issue: treating it as a science problem, where the focus is on improving the models, or as a product problem, where the goal is to build useful products that work around the limitations of LLMs. The author emphasizes that the product approach requires moving towards the user rather than expecting users to adapt to the technology.

Unfair Test for LLMs

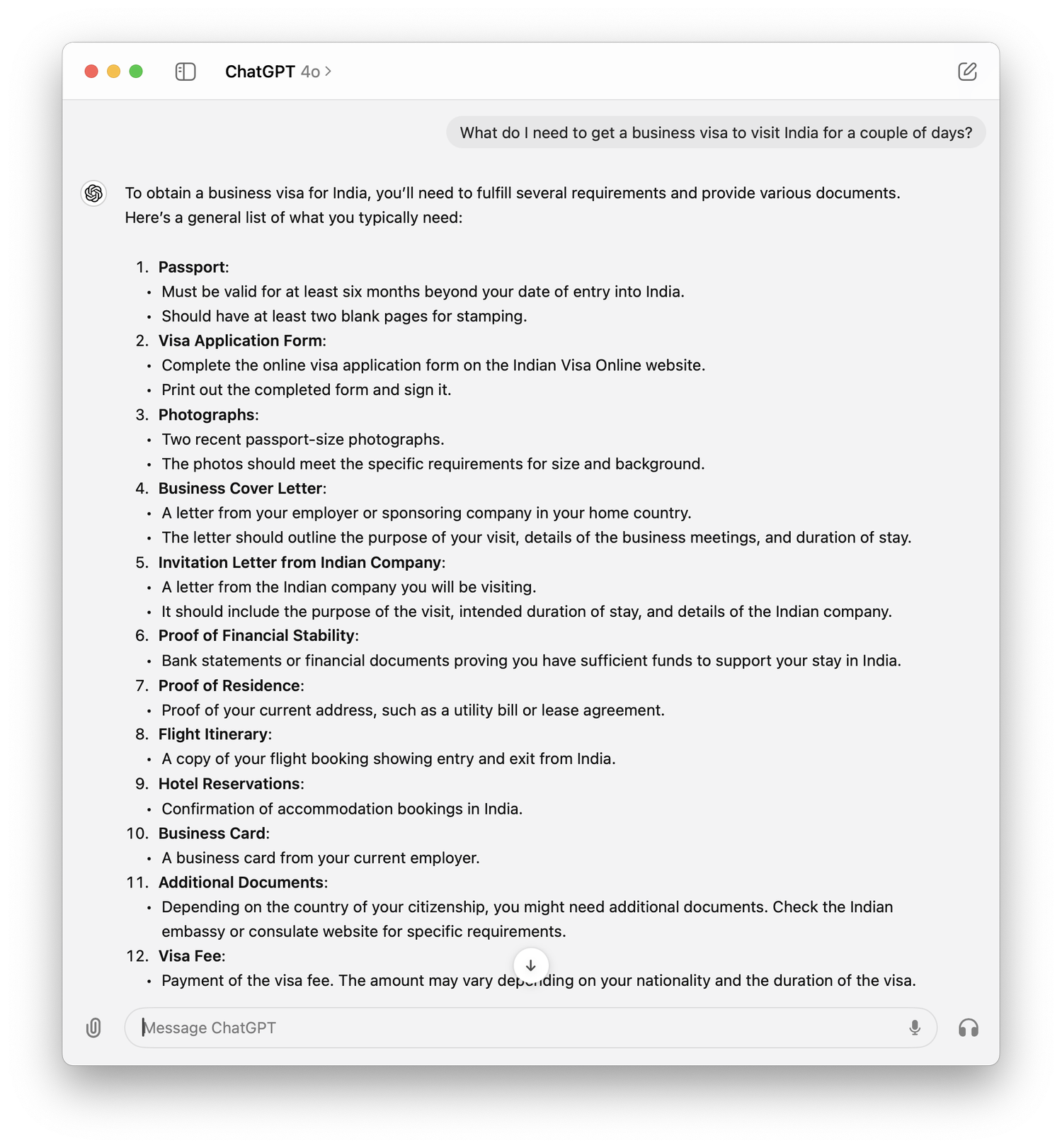

The answer might be right, but you can’t guarantee that.
