This article covers DenseAV, an AI system developed by a research team led by MIT PhD student Mark Hamilton that learns human language from scratch, without any text input. The system relies on contrastive learning: it compares pairs of audio and visual signals and learns to tell which pairs match and which do not, which lets it discover the meaning of language without human supervision. The team trained DenseAV on 2 million YouTube videos and tested it on several tasks, where it outperformed other top models at identifying objects from their names and sounds. Learning language from audio and visual signals alone has implications for understanding animal communication, learning from massive amounts of video data, and discovering patterns between other pairs of signals. The approach could also influence future work in natural language processing and machine learning.

DenseAV Breakthrough

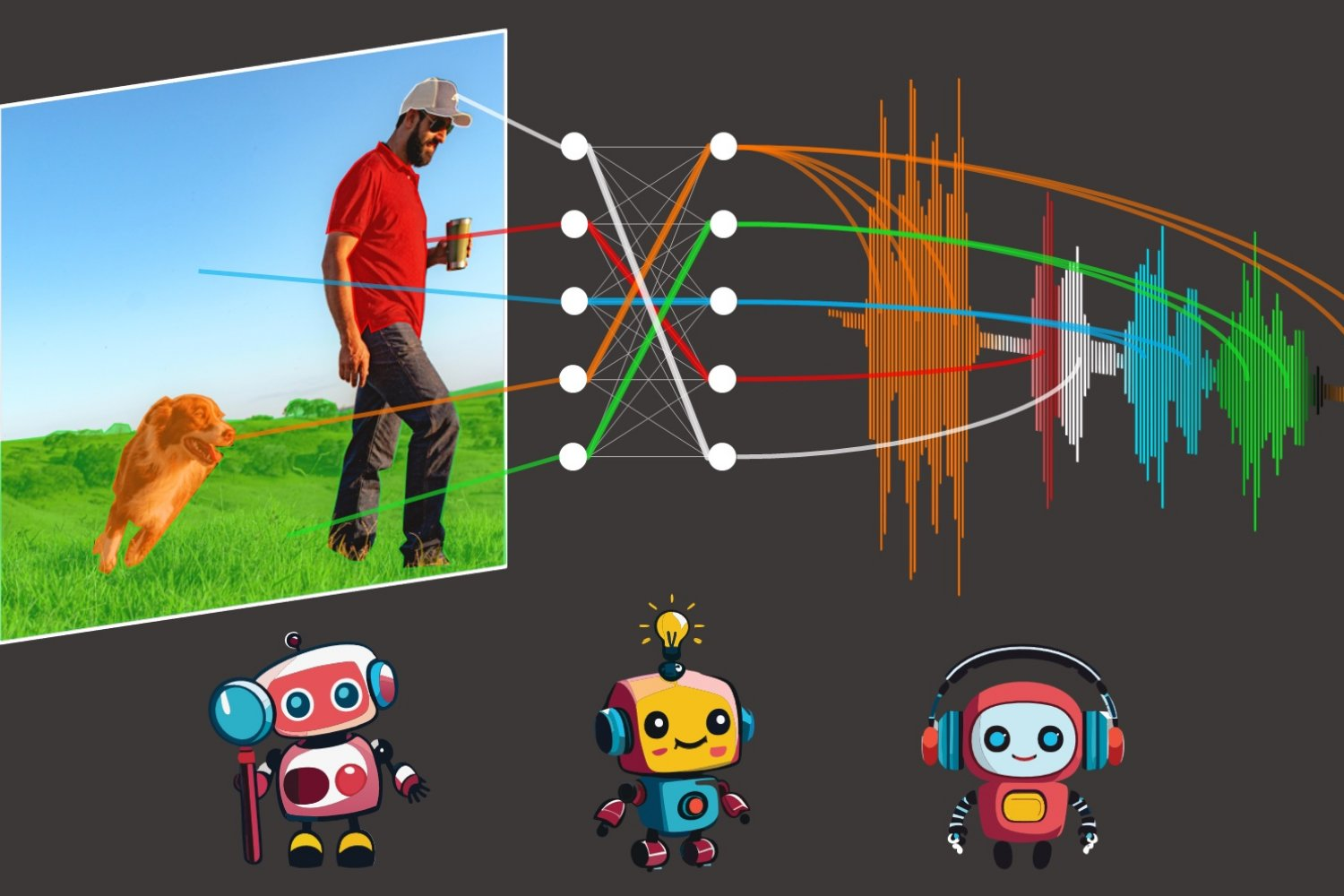

DenseAV aims to learn language by predicting what it’s seeing from what it’s hearing, and vice versa.
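
To make the idea of comparing matching and non-matching audio-visual pairs more concrete, here is a minimal sketch of a symmetric contrastive (InfoNCE-style) loss in plain Python. It is an illustration under stated assumptions, not DenseAV's actual code: the function names, the toy embedding vectors, and the `temperature` value are all hypothetical, and a real system would compute the embeddings with neural networks over audio and video rather than take them as hand-written lists.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def mean_diagonal_nll(sim):
    """Average negative log-probability of the diagonal entry in each row.

    Row i holds the similarity of item i to every candidate; the diagonal
    entry is the true (matching) pair, all other entries are non-matches.
    """
    total = 0.0
    for i, row in enumerate(sim):
        m = max(row)  # subtract the max for a numerically stable log-sum-exp
        log_z = m + math.log(sum(math.exp(s - m) for s in row))
        total += log_z - row[i]
    return total / len(sim)


def contrastive_loss(audio, visual, temperature=0.1):
    """Symmetric contrastive loss over a batch of paired embeddings.

    audio[i] and visual[i] are assumed to come from the same clip.
    The temperature of 0.1 is an illustrative choice, not a published setting.
    """
    a = [normalize(x) for x in audio]
    v = [normalize(x) for x in visual]
    # sim[i][j]: how well audio clip i matches visual clip j
    sim = [[dot(ai, vj) / temperature for vj in v] for ai in a]
    sim_t = [list(col) for col in zip(*sim)]
    # Average the two directions: audio -> visual and visual -> audio
    return 0.5 * (mean_diagonal_nll(sim) + mean_diagonal_nll(sim_t))


# Toy batch: two clips whose audio and visual embeddings line up.
aligned_audio = [[1.0, 0.0], [0.0, 1.0]]
aligned_visual = [[1.0, 0.0], [0.0, 1.0]]
# Same visual embeddings, but paired with the wrong audio.
swapped_visual = [[0.0, 1.0], [1.0, 0.0]]

loss_aligned = contrastive_loss(aligned_audio, aligned_visual)
loss_swapped = contrastive_loss(aligned_audio, swapped_visual)
print(loss_aligned < loss_swapped)
```

The loss is small when each audio embedding is closest to its own visual embedding and large when the pairs are mismatched, so minimizing it pushes matching signals together and non-matching ones apart. Averaging the audio-to-visual and visual-to-audio directions mirrors the "and vice versa" in the description above.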