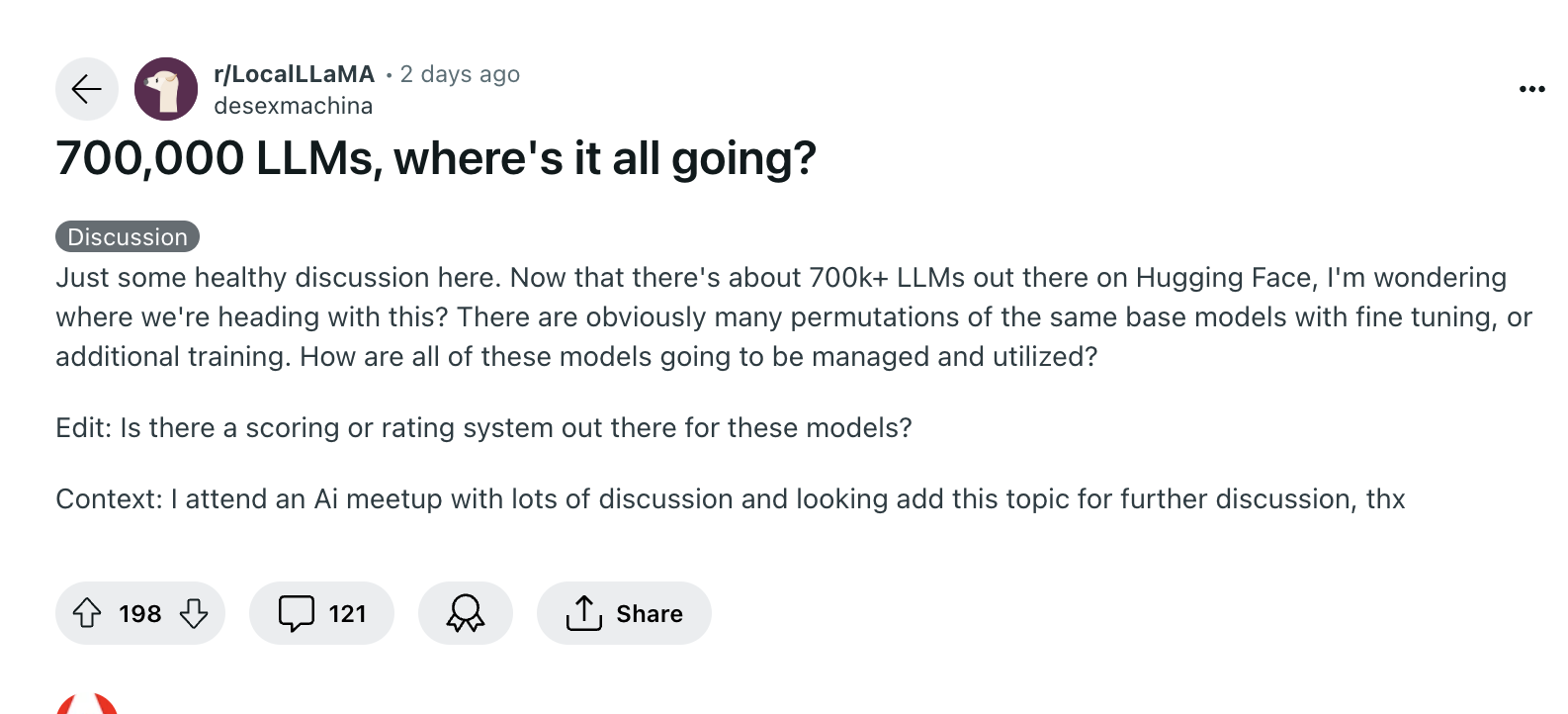

The proliferation of large language models (LLMs) on Hugging Face, now 700,000 and counting, has sparked a heated debate within the artificial intelligence (AI) community. Some argue that most of these models are unnecessary or of poor quality; others see them as crucial stepping stones toward future advances. A Reddit discussion of the issue highlights the need for better management and assessment systems, because the current lack of categorization and standardization makes high-quality models hard to find. One user proposes a different approach to benchmarking: scoring models relative to one another, the way intelligence tests score people against a reference population, which could reduce the problems caused by benchmark data leaking into training sets and by benchmarks going out of date. As the field continues to expand, it will need to balance promoting innovation against upholding quality. The debate also has practical implications: the value of a deep learning model falls quickly as newer ones appear, so models must be continually updated to remain relevant.
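The discussion does not spell out how the relative benchmarking idea would work, so the following is only a minimal sketch of one possible reading: rescale each model's raw score against the rest of the cohort, the way IQ-style scores are centered on 100 with a spread of 15. The function name `norm_referenced_scores`, the model names, and the raw scores are all hypothetical and do not come from the discussion.

```python
from statistics import mean, pstdev


def norm_referenced_scores(raw_scores, center=100.0, spread=15.0):
    """Rescale raw scores relative to the cohort (hypothetical helper).

    Each model's score is expressed as a distance from the cohort mean,
    measured in cohort standard deviations, then mapped onto a scale
    centered on `center` with standard deviation `spread`.
    """
    values = list(raw_scores.values())
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation of the cohort
    if sigma == 0:
        # Every model scored the same, so none stands out from the cohort.
        return {name: center for name in raw_scores}
    return {
        name: center + spread * (score - mu) / sigma
        for name, score in raw_scores.items()
    }


# Made-up raw accuracies for three made-up models on a shared task set.
raw = {"model-a": 0.62, "model-b": 0.71, "model-c": 0.80}
scaled = norm_referenced_scores(raw)

for name, score in sorted(scaled.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: {score:.1f}")
```

Because each score depends on the cohort rather than on a fixed answer key, the scale shifts automatically as stronger models are added. It does not by itself prevent test data from leaking into training sets; that would still require refreshing the underlying tasks.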

AI Model Overload

The multiplication of models is a crucial component of exploration, and shouldn’t be written off as a waste of time or money.
