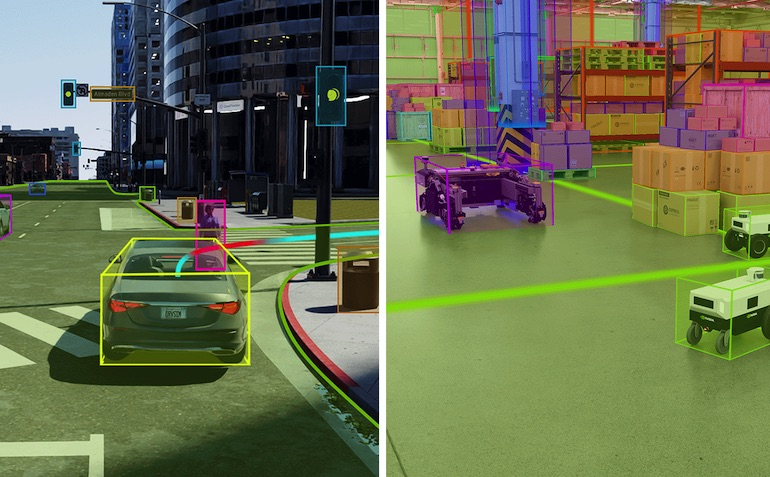

NVIDIA has announced Omniverse Cloud Sensor RTX, a set of microservices that enable physically accurate sensor simulation to accelerate the development of autonomous machines. Developers can use it to test sensor perception and the associated AI software in realistic virtual environments before real-world deployment, improving safety while reducing time and cost. The microservices are built on the OpenUSD framework and powered by NVIDIA RTX ray-tracing and neural-rendering technologies.

Separately, NVIDIA researchers are presenting 50 research projects on visual generative AI at the Computer Vision and Pattern Recognition (CVPR) conference, including new techniques to create and interpret images, videos, and 3D environments. The company has also used Omniverse to create its largest indoor synthetic dataset, built for the AI City Challenge.

According to NVIDIA, the Omniverse Cloud Sensor RTX microservices will let developers build large-scale digital twins of factories, cities, and even Earth, helping accelerate the next wave of AI. The conference papers cover object pose estimation, text-to-image models, and visual language models, among other topics, and illustrate how generative AI could be applied across industries.