In 2022, Google engineer Blake Lemoine claimed that the AI chatbot LaMDA was sentient, meaning that it had feelings and subjective experiences. The evidence he presented was flimsy, however, resting mainly on LaMDA’s human-like use of language. LaMDA’s responses may have seemed to pass the Turing Test, convincing a human that it was sentient, but that test measures how well a machine imitates human conversation, not whether it is conscious. Lemoine’s belief may stem from an evolved human tendency to anthropomorphize non-human entities. Errors such as IBM Watson’s “Toronto” mistake on Jeopardy! or ChatGPT’s five Beatles show that these systems lack true understanding. Moreover, AI “hallucinations” (fabricated information presented as fact) can have serious repercussions. AI can generate creative outputs, but they remain derivative and lack true originality. Philosophically, determining whether an AI is sentient is as difficult as the “Hard Problem of Consciousness” is for humans. Despite its advances, AI still only mirrors human cognition, which reveals both the potential and the limits of our own understanding. Future AI development may not only advance technology but also deepen philosophical inquiry into consciousness.

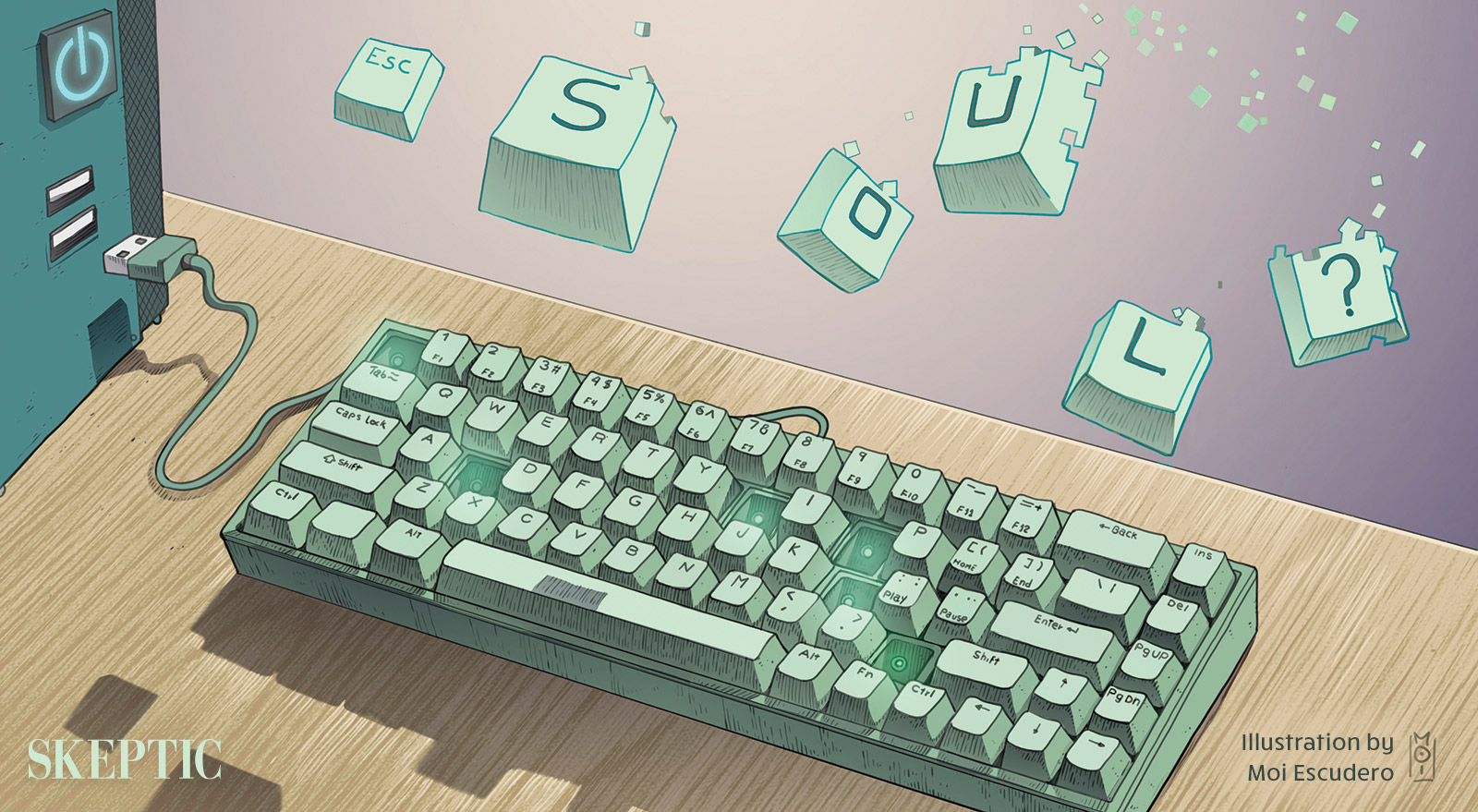

Is AI Sentient? Lessons from Google’s LaMDA and the Future of Consciousness

Google engineer Blake Lemoine claimed that the AI chatbot LaMDA was sentient, prompting debates on AI consciousness.
