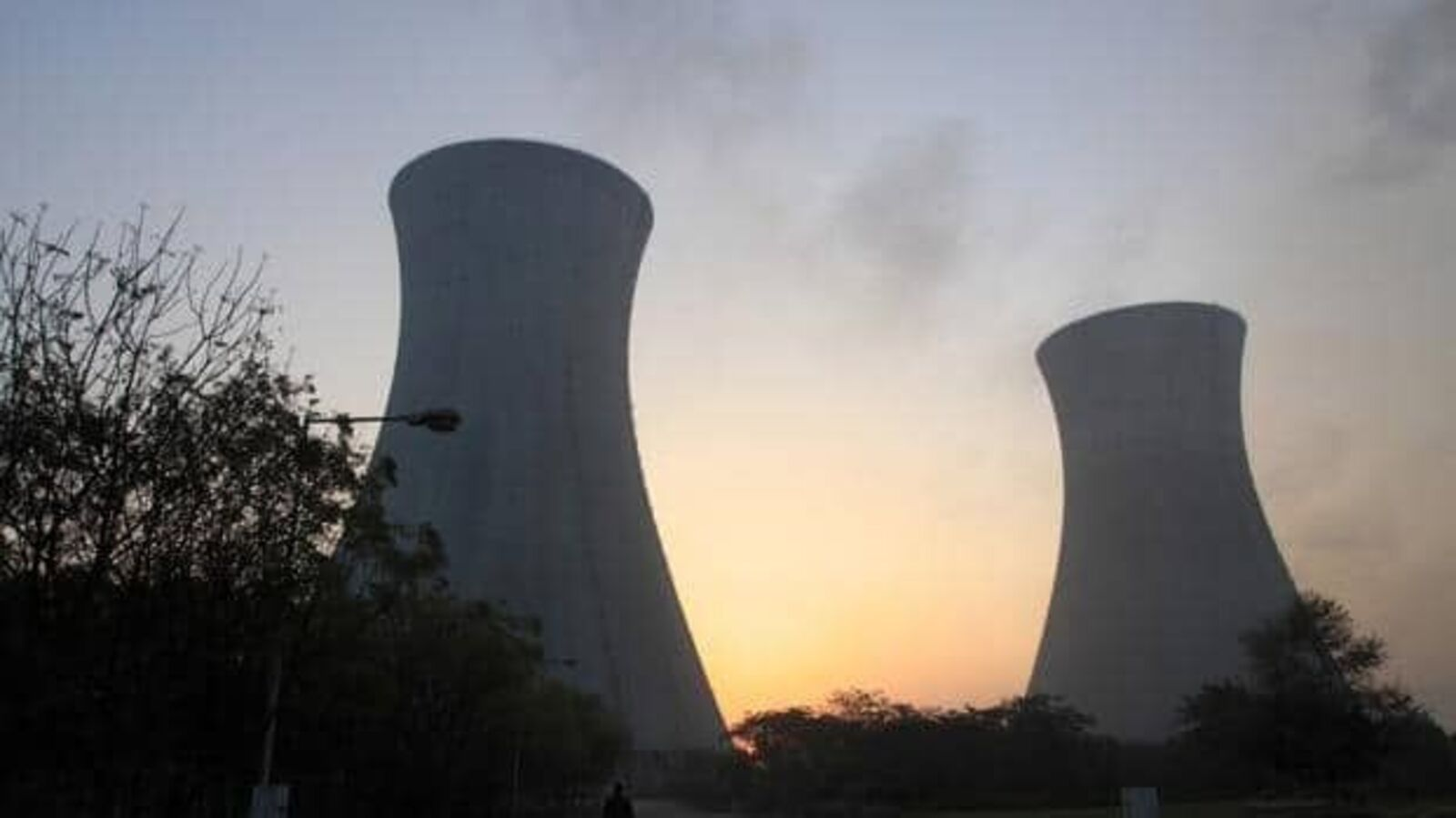

The rise of artificial intelligence (AI) has propelled Nvidia to become the world’s biggest company by market value, but this growth comes with a hefty environmental price tag. Data centres, which house powerful AI chips, are consuming increasing amounts of energy and emitting significant amounts of carbon dioxide. Projections indicate that data centres will consume 8% of US power by 2030, up from 3% in 2022, with overall data centre power demand forecast to rise by 160%. While AI firms often promote their technology as a solution to climate issues, their current operations are exacerbating the problem. Utilities are extending coal plant operations, and companies like Microsoft are investing in gas and nuclear facilities to meet power needs.

Generative AI models like ChatGPT and Claude are particularly energy-intensive because of the substantial computation they require. However, efforts to mitigate this are underway. For instance, Microsoft has developed a ‘1 bit’ architecture that enhances the energy efficiency of large language models by simplifying their calculations. Similarly, Nvidia has created a new chip format to reduce power usage. Despite these advancements, the AI industry remains in an arms race, with companies like OpenAI and Anthropic investing billions in server infrastructure rather than in energy-efficient technologies.
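To give a rough sense of what "simplifying their calculations" can mean, the sketch below restricts each model weight to -1, 0, or +1, so that a dot product needs only additions and subtractions plus a single rescaling. This is an illustrative assumption, not Microsoft's published method: the function names `quantize_ternary` and `ternary_dot`, the mean-absolute-value scale, and the sample numbers are all hypothetical.

```python
def quantize_ternary(weights):
    """Map each weight to -1, 0, or +1 (assumed scheme, for illustration only)."""
    # Scale by the mean absolute weight; fall back to 1.0 if all weights are zero.
    scale = sum(abs(w) for w in weights) / len(weights) or 1.0
    # Round to the nearest integer, then clamp into the range [-1, 1].
    ternary = [max(-1, min(1, round(w / scale))) for w in weights]
    return ternary, scale


def ternary_dot(ternary, scale, inputs):
    """Approximate a dot product without multiplying by individual weights."""
    total = 0.0
    for t, x in zip(ternary, inputs):
        if t == 1:
            total += x   # weight +1: add the input
        elif t == -1:
            total -= x   # weight -1: subtract the input
        # weight 0: skip the input entirely
    return total * scale  # one multiplication for the whole row


# Hypothetical sample values, not taken from any real model.
weights = [0.9, -1.1, 0.05, 1.0]
inputs = [2.0, 1.0, 5.0, 3.0]

ternary, scale = quantize_ternary(weights)
approx = ternary_dot(ternary, scale, inputs)
exact = sum(w * x for w, x in zip(weights, inputs))
print(ternary, approx, exact)
```

The approximate result differs from the exact one, which is the trade-off: hardware that only adds and subtracts generally uses less energy per operation, at the cost of some precision that real systems try to recover during training.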

Reducing energy consumption is both crucial and achievable, as the gains in data centre efficiency between 2015 and 2019 showed. Industry leaders must prioritize developing more energy-efficient models rather than relying on future energy solutions like nuclear fusion, which remains far from commercialization. Optimizing AI algorithms now could significantly reduce the industry’s environmental impact and align its growth with sustainable practices.