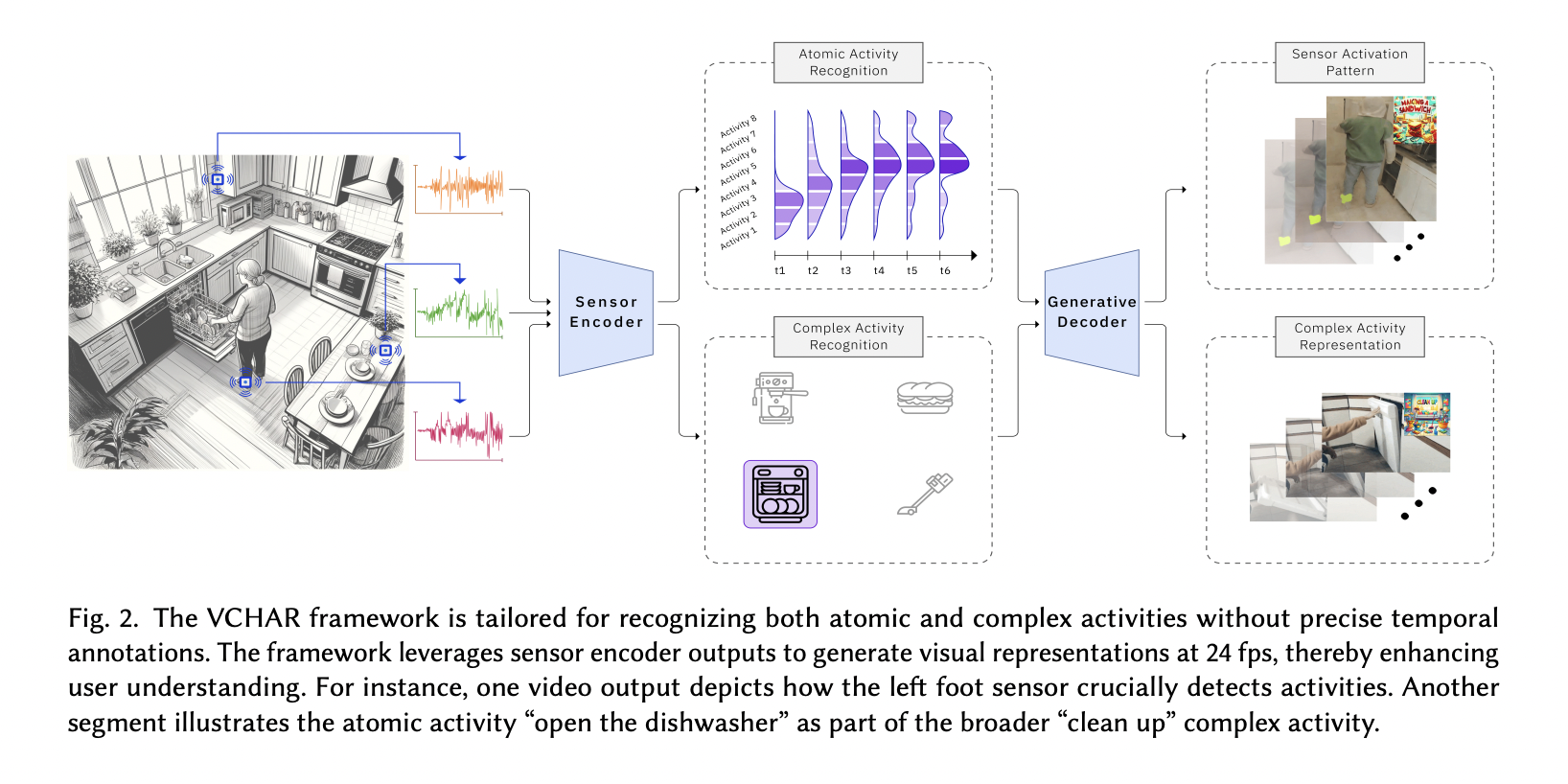

VCHAR is a novel framework for Complex Human Activity Recognition in smart environments. It addresses the labor-intensive data labeling that such recognition usually requires, and it improves accuracy without needing precise temporal information about when each activity occurs.

Key points:

- VCHAR uses a generative approach, treating atomic activities as distributions over time intervals.

- It uses Kullback-Leibler (KL) divergence to approximate the distribution of atomic activities within each time interval.

- The framework includes a generative decoder that creates visual representations of activities and sensor data.

- VCHAR integrates language models and vision-language models to generate comprehensive visual outputs.
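The summary does not say how VCHAR computes this objective, so the following is only a rough illustration of the general idea, not VCHAR's implementation. It assumes a model that emits per-timestep probabilities over a hypothetical set of three atomic activities, and interval-level annotations given only as counts (no timestamps). The per-step predictions are averaged into one distribution for the interval, which is then scored against the target with KL divergence. The function names, the averaging step, and the example numbers are all assumptions.

```python
import math


def interval_distribution(step_probs):
    """Average per-timestep probability vectors into a single distribution
    over atomic activities for the whole interval (assumed aggregation)."""
    n_steps = len(step_probs)
    n_classes = len(step_probs[0])
    return [sum(step[k] for step in step_probs) / n_steps for k in range(n_classes)]


def target_distribution(label_counts):
    """Normalize coarse interval-level annotations (how often each activity
    was reported in the interval) into a probability distribution."""
    total = sum(label_counts)
    return [c / total for c in label_counts]


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) = sum_k p_k * log(p_k / q_k), with p the target and q the
    model's interval distribution. Terms with p_k == 0 contribute 0 by the
    convention 0 * log 0 = 0; eps guards against q_k == 0."""
    return sum(pk * math.log(pk / max(qk, eps)) for pk, qk in zip(p, q) if pk > 0)


# Hypothetical example: 4 timesteps, activities ordered as [sit, walk, stand].
step_probs = [
    [0.7, 0.2, 0.1],
    [0.6, 0.3, 0.1],
    [0.1, 0.8, 0.1],
    [0.2, 0.7, 0.1],
]
q = interval_distribution(step_probs)  # roughly [0.4, 0.5, 0.1]
p = target_distribution([2, 2, 0])      # annotation: half sitting, half walking
loss = kl_divergence(p, q)
print(round(loss, 4))  # prints 0.1116
```

Because only the interval-level distribution is compared with the target, the model is free to place each activity anywhere inside the interval, which is one way a method can avoid requiring precise temporal labels.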

VCHAR matters because it improves activity recognition in real-world scenarios and makes the results more accessible and interpretable for non-experts. This is relevant to applications such as healthcare, elderly care, and surveillance, where it could improve the effectiveness of smart environments.