Transforming Language Agent Development

Language agent development is shifting from hand-engineered pipelines toward learning from data. Until now, agents have mostly been built through manual task decomposition and engineering-centric design, which limits their adaptability and robustness. Researchers are now pushing for a data-centric learning paradigm, with the aim of making language agents more versatile across diverse tasks and data distributions.

Key Innovations and Challenges

- Current methods, including prompt-based and search-based approaches, are limited in how well they can optimize agents for complex real-world tasks.

- Existing techniques struggle to optimize an agent system as a whole, so they often settle into local optima.

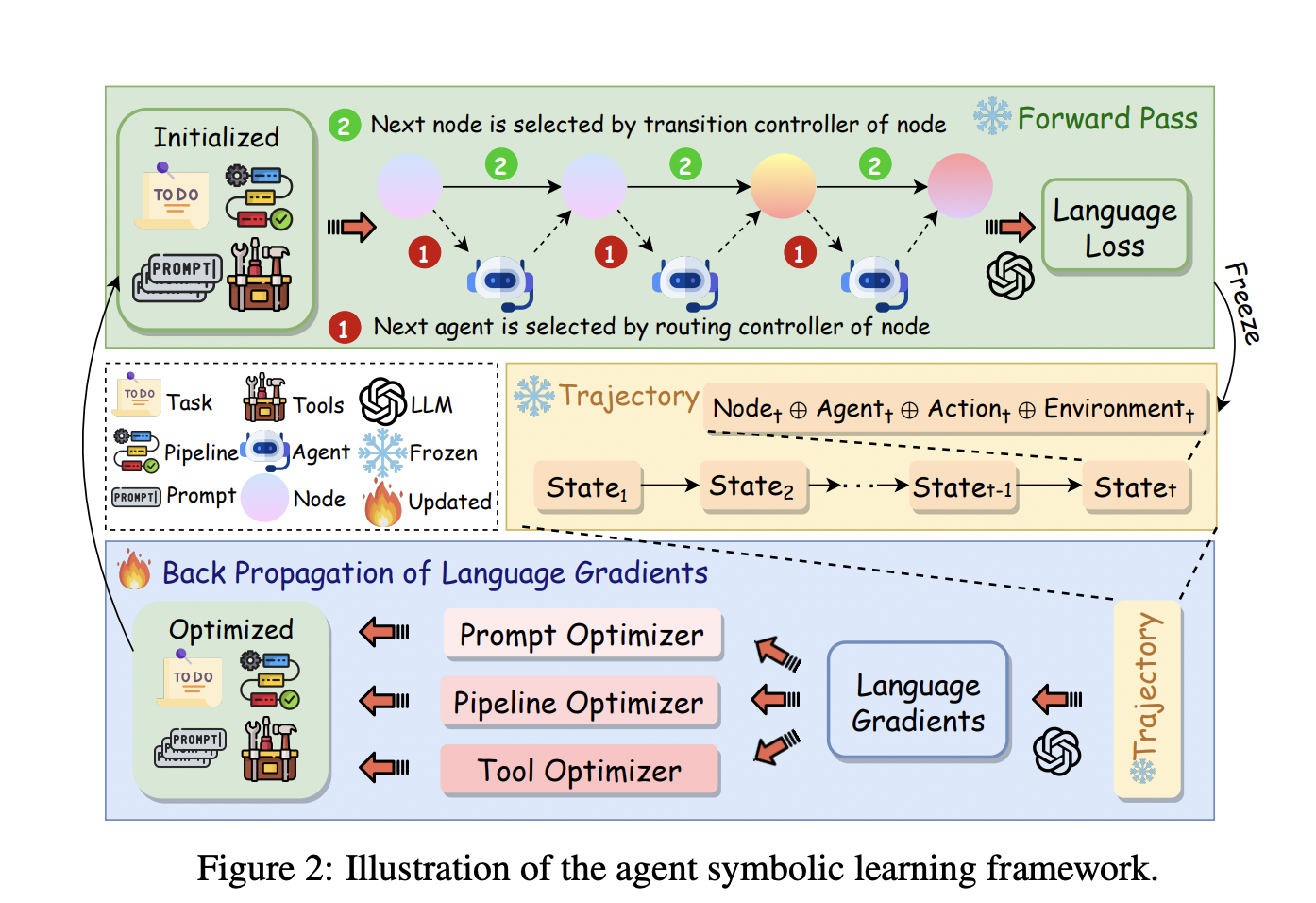

- The new agent symbolic learning framework draws inspiration from neural network learning, mapping agent components to neural network elements.

- This framework introduces concepts like “language loss” and “language gradients” to guide comprehensive optimization of all symbolic components.
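
The analogy in the last two points can be sketched in code. The example below is a minimal illustration under stated assumptions, not the framework's actual implementation: it assumes that each pipeline step's prompt plays the role of a layer's weights, that the "language loss" is a textual critique of the final output, and that "language gradients" are textual suggestions passed backward from the last step to the first. All names (`Node`, `Step`, `forward`, `language_loss`, `backward`, `update`, `train_step`, `stub_model`) are hypothetical, and `stub_model` is a deterministic placeholder standing in for a real language model call.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical stand-in type for a language model call (text in, text out).
Model = Callable[[str], str]


@dataclass
class Node:
    """One pipeline step; its prompt is assumed to act like a layer's weights."""
    name: str
    prompt: str


@dataclass
class Step:
    """Record of one node's forward pass, kept for the backward pass."""
    node: Node
    input_text: str
    output_text: str


def forward(nodes: List[Node], task: str, llm: Model) -> List[Step]:
    """Run the pipeline in order, feeding each node's output to the next."""
    trajectory: List[Step] = []
    text = task
    for node in nodes:
        output = llm(f"{node.prompt}\n\nInput: {text}")
        trajectory.append(Step(node, text, output))
        text = output
    return trajectory


def language_loss(trajectory: List[Step], critic: Model) -> str:
    """Textual 'loss': a critique of the final output instead of a number."""
    return critic(f"Critique this result: {trajectory[-1].output_text}")


def backward(trajectory: List[Step], loss: str, critic: Model) -> List[str]:
    """Textual 'gradients': walk the steps in reverse, passing feedback back."""
    gradients: List[str] = []
    downstream = loss
    for step in reversed(trajectory):
        gradient = critic(
            f"Feedback from later steps: {downstream}\n"
            f"Step '{step.node.name}' produced: {step.output_text}\n"
            "How should this step's prompt change?"
        )
        gradients.append(gradient)
        downstream = gradient
    gradients.reverse()  # align gradients with pipeline order
    return gradients


def update(nodes: List[Node], gradients: List[str], optimizer: Model) -> None:
    """'Optimizer step': rewrite each prompt using its textual gradient."""
    for node, gradient in zip(nodes, gradients):
        node.prompt = optimizer(
            f"Current prompt: {node.prompt}\n"
            f"Suggested change: {gradient}\n"
            "Rewrite the prompt."
        )


def train_step(nodes: List[Node], task: str, llm: Model,
               critic: Model, optimizer: Model) -> Tuple[str, List[str]]:
    """One forward pass, loss, backward pass, and update over all nodes."""
    trajectory = forward(nodes, task, llm)
    loss = language_loss(trajectory, critic)
    gradients = backward(trajectory, loss, critic)
    update(nodes, gradients, optimizer)
    return loss, gradients


def stub_model(prompt: str) -> str:
    """Deterministic placeholder so the sketch runs without a real model."""
    return f"[model saw {len(prompt)} chars]"


if __name__ == "__main__":
    pipeline = [Node("plan", "Outline the task."), Node("write", "Write the draft.")]
    loss, grads = train_step(pipeline, "Summarize a report",
                             stub_model, stub_model, stub_model)
    print(loss, grads, [n.prompt for n in pipeline])
```

The point of the structure is that every node receives feedback in a single pass, rather than one prompt being tuned in isolation. Replacing `stub_model` with real model calls would be needed before the critiques and rewritten prompts carry any meaning.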

Implications and Future Prospects

The agent symbolic learning framework allows an agent's symbolic components to be optimized together and lets agents keep evolving after deployment, which points toward more adaptable and efficient AI systems. The approach has shown superior performance across various benchmarks, including complex tasks in software development and creative writing. As the field moves toward data-centric agent research, the framework could contribute to progress toward artificial general intelligence and change how language agents are developed and applied in real-world scenarios.