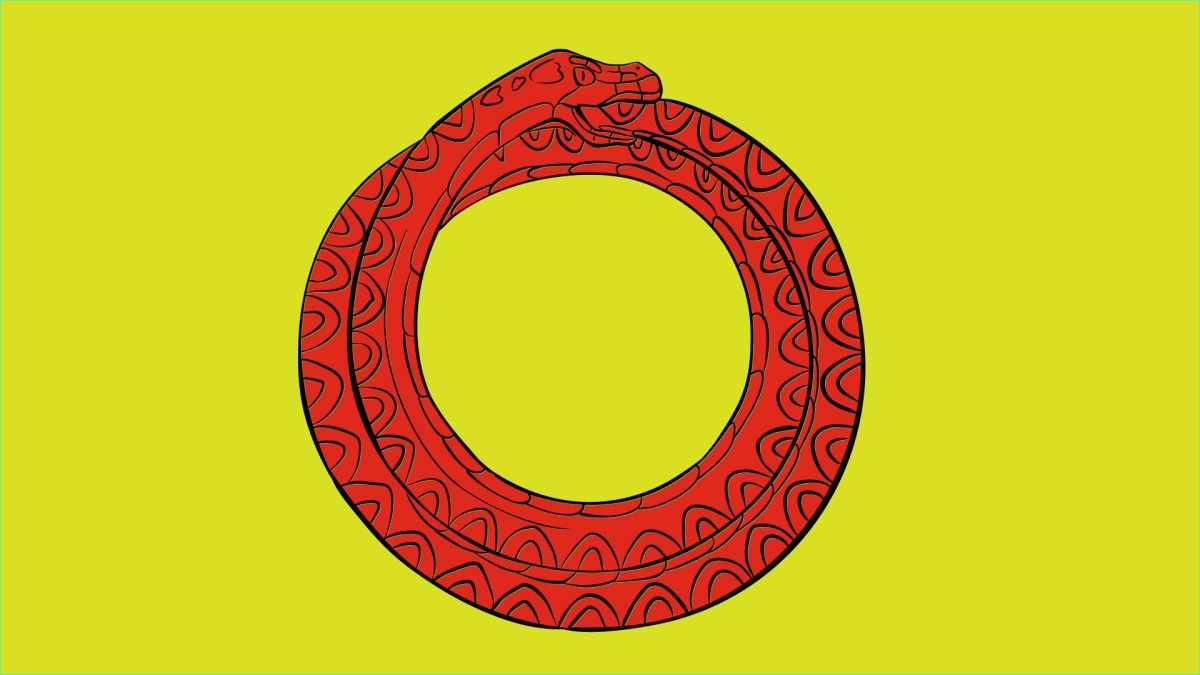

The Ouroboros Effect in AI

A new study published in Nature reveals a concerning phenomenon in artificial intelligence: model collapse. This degenerative process occurs when AI models are repeatedly trained on data generated by other AI models, causing them to progressively lose touch with the true underlying data distribution.

Key Insights:

- AI models naturally gravitate toward common, middle-of-the-road outputs and underrepresent rare ones

- The internet is increasingly flooded with AI-generated content

- New AI models trained on this content risk amplifying biases and losing diversity

- Over time, this cycle can lead to models becoming “weirder and dumber” until they collapse
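The feedback loop described above can be illustrated with a toy simulation. This is only a sketch under strong assumptions: the "model" is a single Gaussian fitted to its training data, each generation is trained solely on samples from the previous generation, and the function name `simulate_collapse` and its parameters are hypothetical choices for illustration, not the study's actual experimental setup.

```python
import random
import statistics


def simulate_collapse(generations=200, sample_size=20, seed=0):
    """Repeatedly fit a Gaussian to samples drawn from the previous fit.

    Returns the fitted standard deviation at each generation, starting
    with the original ("human") distribution at index 0.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # assumed stand-in for the true data distribution
    stds = [sigma]
    for _ in range(generations):
        # Generate synthetic data from the current model only.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        # "Train" the next model: maximum-likelihood Gaussian fit.
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)
        stds.append(sigma)
    return stds


if __name__ == "__main__":
    history = simulate_collapse()
    for generation in (0, 50, 100, 200):
        print(f"generation {generation:3d}: std = {history[generation]:.4f}")
```

With a small sample size, each refit tends to slightly underestimate the spread and the errors compound, so the fitted standard deviation typically drifts toward zero: the rare, tail outputs disappear first. Real language models are far more complex, but the same compounding of sampling error is the intuition behind the cycle.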

Implications for AI Development

This discovery poses significant challenges for the AI industry. Model performance depends on high-quality, diverse training data, so as AI-generated content makes up more of what gets scraped, model collapse could limit how far current AI technologies can improve. Companies may be incentivized to hoard original, human-generated data, potentially giving a “first mover advantage” to those who gathered their training data before AI-generated content became widespread.

Mitigating model collapse will require innovative solutions, such as:

- Developing qualitative and quantitative benchmarks for data sourcing and variety

- Creating effective watermarking techniques for AI-generated content

- Implementing strategies to preserve and prioritize human-generated data
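As one illustration of what a quantitative variety benchmark might look like, the sketch below computes a distinct-n ratio: the share of n-grams in a corpus that are unique. This metric is an assumption chosen for illustration, not something the study prescribes, and the function name `distinct_n` and the whitespace tokenization are simplifications.

```python
def distinct_n(texts, n=2):
    """Return the fraction of n-grams across `texts` that are unique.

    Values near 1.0 suggest varied text; values near 0.0 suggest
    heavy repetition. Tokenization is naive whitespace splitting.
    """
    total = 0
    unique = set()
    for text in texts:
        tokens = text.lower().split()
        for i in range(len(tokens) - n + 1):
            unique.add(tuple(tokens[i:i + n]))
            total += 1
    # An empty corpus (or texts shorter than n tokens) has no n-grams.
    return len(unique) / total if total else 0.0


if __name__ == "__main__":
    repetitive = ["the cat sat", "the cat sat"]
    varied = ["the cat sat", "a dog ran off"]
    print(distinct_n(repetitive))
    print(distinct_n(varied))
```

A data pipeline could track a score like this across successive scrapes of the same sources; a steady decline would be one warning sign that the data is becoming more homogeneous. It is a coarse proxy and would need to be paired with qualitative review.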

The study underscores the importance of addressing this issue if the benefits of large-scale web-scraped training data are to be sustained. It also highlights the increasing value of genuine human-generated content in the age of AI.