The Rise of AI in Table Tennis

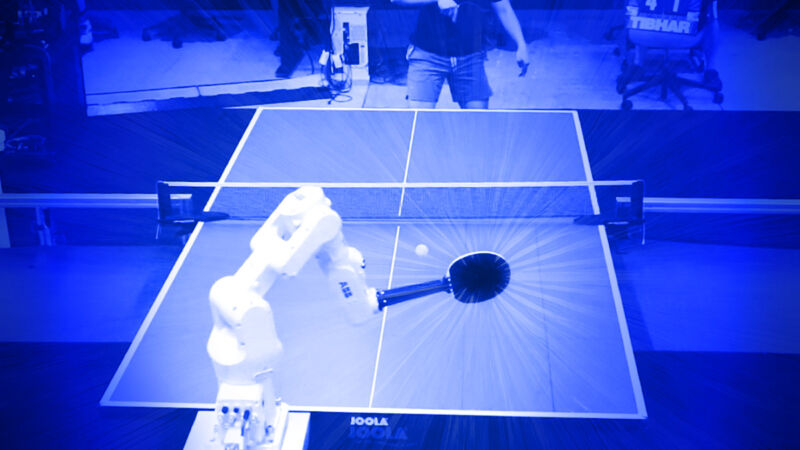

Google DeepMind has developed a robotic table tennis player that reaches a “solidly amateur” level of skill against human opponents. Table tennis demands speed, precision, and strategic decision-making all at once, which makes this result a meaningful step forward for robotics and artificial intelligence. The robot’s performance suggests that AI systems can learn physical tasks once thought to be exclusively human domains.

Key Developments and Challenges

- In matches against 29 human opponents, the AI-controlled robot arm defeated all beginner players and about 55% of intermediate players.

- Advanced players still outperformed the robot, indicating room for improvement in its capabilities.

- The system combines low-level skill controllers for specific techniques with a high-level strategic decision-maker that adapts to opponents’ styles.

- Researchers used a hybrid training approach, combining reinforcement learning in simulated environments with real-world data from approximately 17,500 ball trajectories.
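
The two-level design described above can be illustrated with a minimal Python sketch. It is not DeepMind's implementation: the `Ball` encoding, the skill names, the 0.5 starting estimate, and the running-average update are all assumptions chosen to show the idea of a high-level selector that picks among low-level skills and shifts its preferences as it learns what works against a given opponent.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Ball:
    side: str  # "forehand" or "backhand": where the ball arrives (hypothetical encoding)
    spin: str  # "topspin" or "backspin"


@dataclass
class Skill:
    name: str
    side: str                   # side of the table this skill covers
    act: Callable[[Ball], str]  # stand-in for a learned low-level controller


@dataclass
class HighLevelController:
    skills: List[Skill]
    # Running estimate of how often each skill wins the point against this opponent.
    success: Dict[str, float] = field(default_factory=dict)
    step: float = 0.2           # update rate for the running estimate (assumed value)

    def choose(self, ball: Ball) -> Skill:
        # Only skills that cover the incoming ball's side are candidates.
        candidates = [s for s in self.skills if s.side == ball.side]
        # Untried skills start at 0.5; ties go to the earlier skill in the list.
        return max(candidates, key=lambda s: self.success.get(s.name, 0.5))

    def update(self, skill: Skill, won_point: bool) -> None:
        # Move the estimate a fraction of the way toward the latest outcome.
        old = self.success.get(skill.name, 0.5)
        self.success[skill.name] = old + self.step * (float(won_point) - old)


# Hypothetical skill library; each lambda stands in for a trained policy.
SKILLS = [
    Skill("forehand_topspin", "forehand", lambda b: f"forehand topspin vs {b.spin}"),
    Skill("forehand_push", "forehand", lambda b: f"forehand push vs {b.spin}"),
    Skill("backhand_block", "backhand", lambda b: f"backhand block vs {b.spin}"),
]

if __name__ == "__main__":
    controller = HighLevelController(SKILLS)
    ball = Ball(side="forehand", spin="backspin")
    skill = controller.choose(ball)
    print(skill.name, "->", skill.act(ball))
    controller.update(skill, won_point=False)  # the opponent won this rally
    print("next choice:", controller.choose(ball).name)
```

In this sketch, losing a point with one forehand skill lowers its estimate, so the controller switches to the other forehand skill on the next similar ball. `choose` assumes at least one skill covers the incoming side; a fuller version would need a fallback.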

Implications for Robotics and AI

This achievement has broader implications for artificial intelligence and robotics. It shows that AI systems can learn complex physical tasks that require rapid decision-making and precise motor control. The hybrid training approach, which pairs simulation with real-world data, could be applied to other areas of robotics to make learning more efficient. As AI advances in sports and other domains, it may offer new insights into human performance and open up possibilities for human-robot collaboration.

Sources: arstechnica.com, iflscience.com, livescience.com, techcrunch.com, technologyreview.com

Image Source: arstechnica.com