Overview of the Settlement

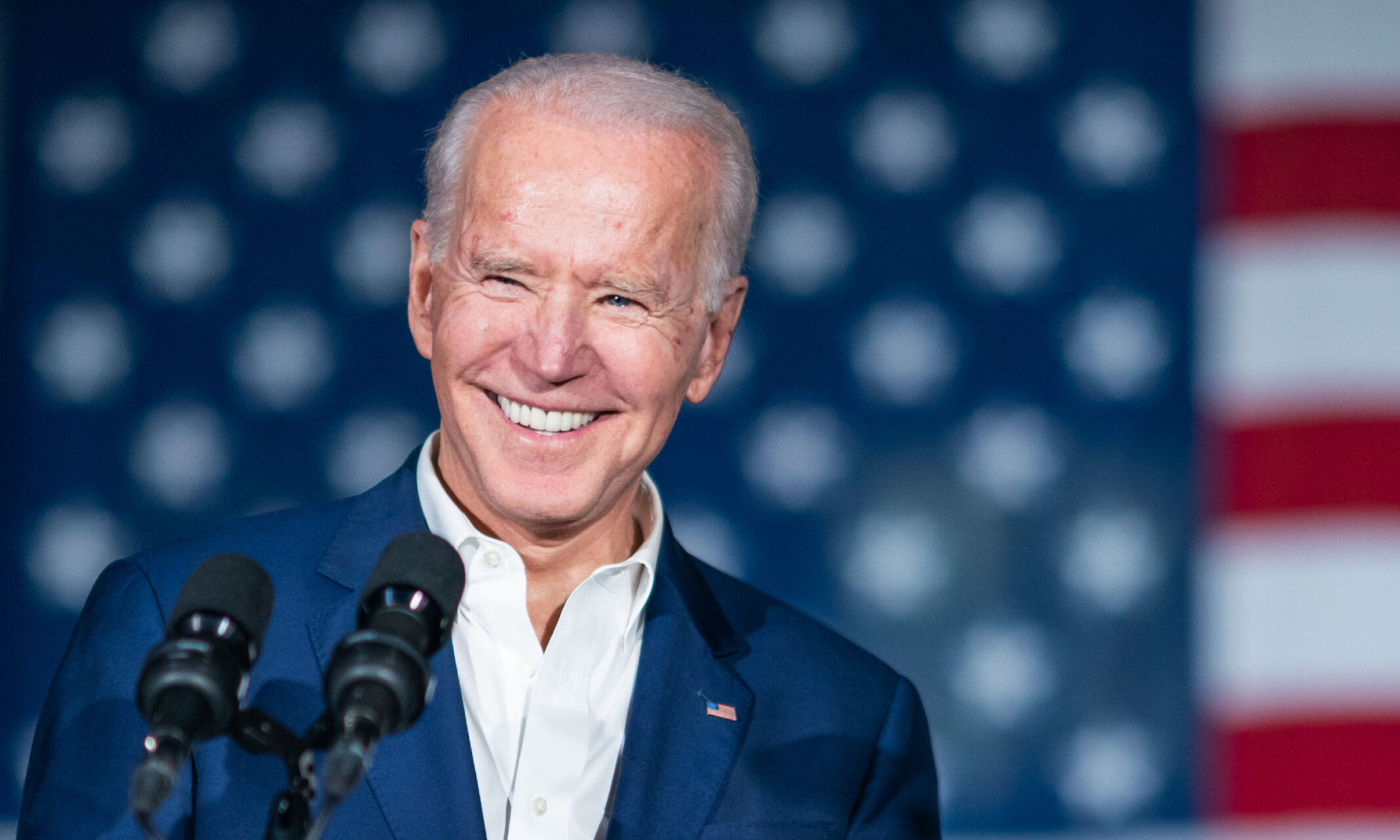

Lingo Telecom, a voice service provider, has settled with the Federal Communications Commission (FCC) over fake AI-generated robocalls that impersonated President Joe Biden. Under the settlement, Lingo will pay a $1 million fine, half of the $2 million the FCC initially proposed. The investigation began in January 2024, when New Hampshire officials learned of calls that misled voters ahead of the state's primary election. The calls urged voters to skip the primary and save their votes for the November general election. The caller ID on the calls displayed the personal phone number of a prominent Democrat, which led to a formal complaint.

Key Details of the Case

- The robocalls used AI voice cloning to mimic Biden’s voice, spreading disinformation.

- The FCC requires Lingo Telecom to strictly follow its caller ID authentication rules.

- Steve Kramer, the political consultant who orchestrated the calls, faces separate legal action, including charges of voter suppression.

- The FCC’s settlement includes a historic compliance plan to prevent future misuse of AI in communications.

Significance of the Issue

This case highlights how AI technology can be used to manipulate democratic processes and voter behavior. The FCC's actions aim to restore trust in communication networks and protect citizens from deceptive practices. As AI tools become more widespread, transparency in political messaging is crucial to preserving the integrity of elections. The outcome of this case may also set a precedent for how AI-generated content is regulated, underscoring that service providers are accountable for helping safeguard democratic processes.