Understanding the Challenge

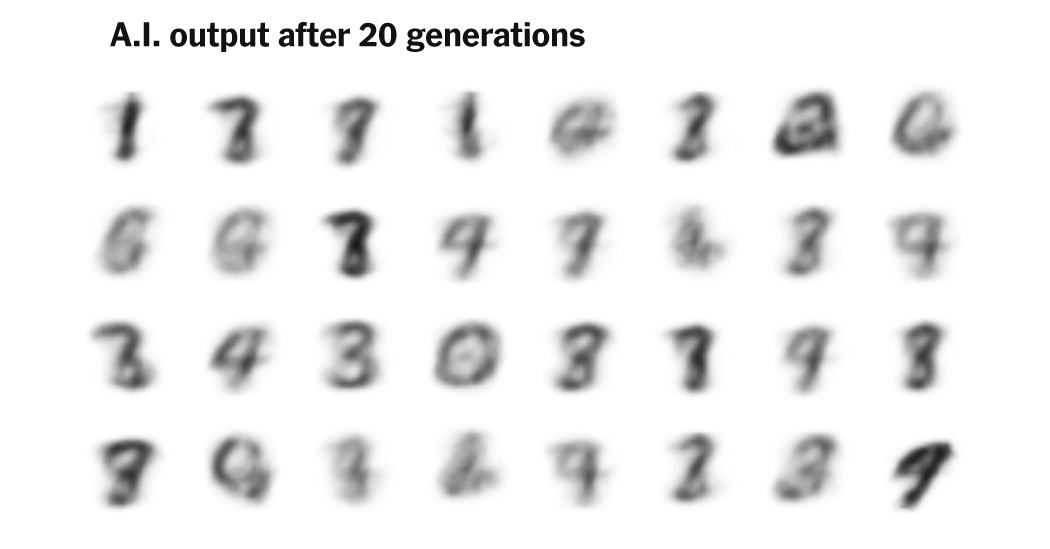

A.I.-generated content is flooding the internet, making it difficult to discern what is real. This surge poses a significant risk to future A.I. systems, as they may inadvertently learn from their own flawed outputs. When A.I. models are trained on their own generated data, they can enter a cycle of degradation known as “model collapse,” where the quality and diversity of their output diminishes over time. This phenomenon can lead to less accurate information, as seen with the potential decline in medical or educational A.I. systems that rely on distorted data.

Key Points to Note

- The internet is inundated with A.I.-generated text and images, complicating data quality.

- Training A.I. on its own output leads to repetitive, lower-quality results.

- Research shows that A.I. trained on its own data produces similar outputs, risking loss of diversity.

- High-quality, human-sourced data is essential to maintain A.I.’s effectiveness and reliability.

The Bigger Picture

The ongoing challenge of A.I. content generation emphasizes the need for diverse and high-quality data. Without it, A.I. systems may struggle to perform accurately and could perpetuate biases. Companies must invest in sourcing real, human-generated data to avoid these pitfalls. As A.I. continues to evolve, understanding the implications of training models on synthetic data is crucial to prevent further decline in output quality and diversity. This awareness is vital for the future development of A.I. technologies that can serve society effectively.