Understanding the Concerns of Innovators

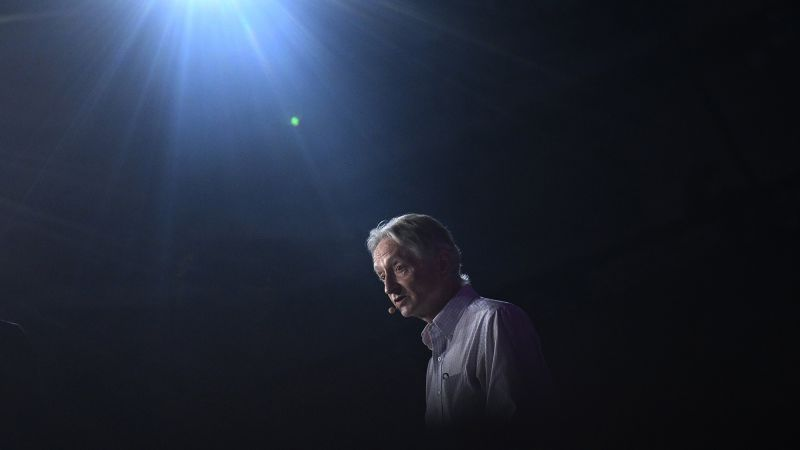

Geoffrey Hinton, a leading figure in machine learning, won the 2024 Nobel Prize in Physics. He has expressed serious concerns about artificial intelligence, suggesting it could surpass human intellect and lead to unforeseen consequences. Hinton’s warnings echo those of other Nobel laureates who cautioned against the potential dangers of their own groundbreaking discoveries, in fields ranging from nuclear physics to antibiotics and genetic engineering.

Key Points to Consider

- Hinton believes AI could revolutionize productivity but warns of risks like losing control to super-intelligent systems.

- Earlier laureates such as Irene Joliot-Curie and Alexander Fleming warned that their work could lead to nuclear catastrophe and antibiotic resistance, respectively.

- Paul Berg acknowledged the risks of genetic engineering, emphasizing the need for careful management and public understanding.

- Jennifer Doudna highlighted the ethical implications of gene editing, particularly concerning human germline modifications, stressing the importance of responsible use.

The Broader Implications of Scientific Progress

The warnings from these laureates stress the need for caution as society embraces new technologies. While innovations can improve lives, they can also pose significant risks if not managed properly. The potential for misuse and unforeseen consequences calls for ongoing dialogue among scientists, policymakers, and the public. Understanding these risks is essential for ensuring that advancements benefit humanity without leading to harmful outcomes.