Overview of Innovations

Businesses are increasingly using generative AI technologies, but the costs associated with large language models (LLMs) can be significant. To tackle this challenge, AWS has introduced two new features for its Bedrock LLM hosting service: caching and intelligent prompt routing. These innovations aim to help companies save money while improving performance and response times.

Key Details

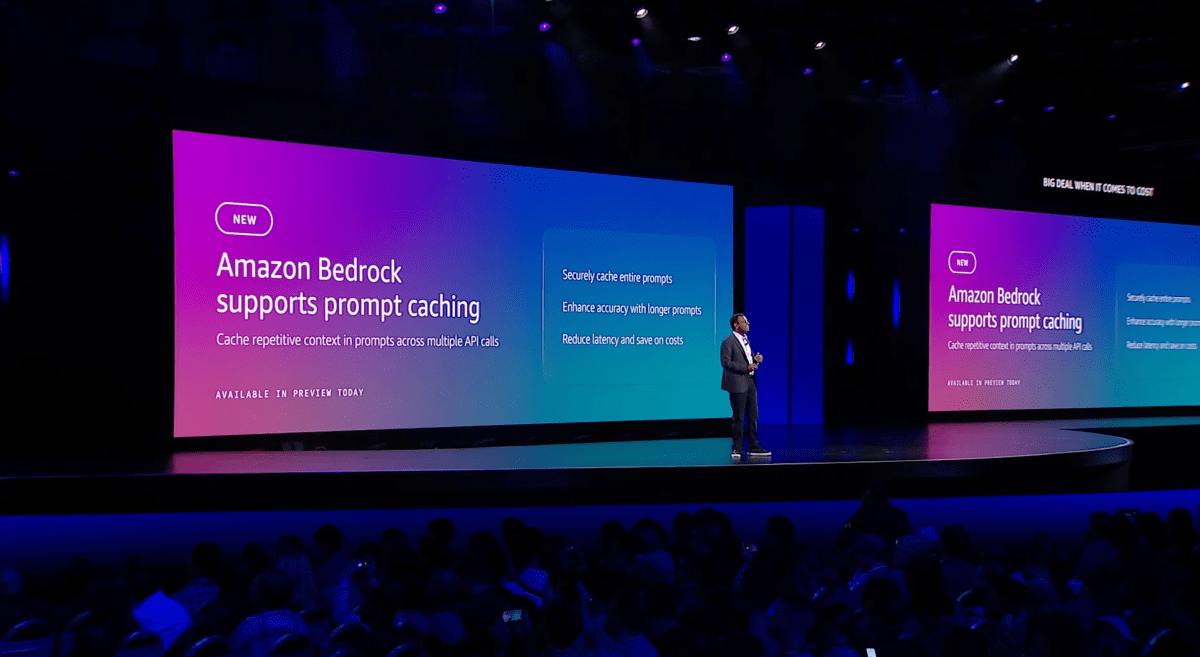

- Caching: This feature lets repeated queries against the same document reuse previously processed context instead of paying full price to process it again. AWS says it can reduce costs by up to 90% and latency by up to 85%.

- Intelligent Prompt Routing: Bedrock can now automatically direct queries to the most suitable model based on the complexity of the request. This feature ensures that simpler queries do not waste resources on expensive models.

- Marketplace Launch: AWS is launching a marketplace for specialized models, which users manage themselves. It will provide access to around 100 emerging models that cater to specific needs.

- Future Plans: AWS aims to expand its intelligent routing capabilities, allowing for greater customization and flexibility in model selection.
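
To make the caching and routing ideas above concrete, here is a minimal Python sketch. It does not use the Bedrock API and is not how AWS implements either feature. The model names, the word-count heuristic, the per-token price, and the 10% cached rate are all assumptions chosen for illustration (the cached rate simply mirrors the "up to 90%" figure).

```python
import hashlib

# Hypothetical model tiers; these are placeholders, not real Bedrock model IDs.
SMALL_MODEL = "small-model"
LARGE_MODEL = "large-model"


def route(prompt: str, word_threshold: int = 50) -> str:
    """Send short prompts to the cheap tier and long ones to the expensive tier.

    Word count is a crude stand-in for "complexity" (an assumption).
    """
    return LARGE_MODEL if len(prompt.split()) > word_threshold else SMALL_MODEL


class DocumentCache:
    """Tracks which documents have been processed before, keyed by content hash."""

    def __init__(self) -> None:
        self._seen = set()

    def is_cached(self, document: str) -> bool:
        """Return True if the document was seen earlier; record it otherwise."""
        key = hashlib.sha256(document.encode("utf-8")).hexdigest()
        if key in self._seen:
            return True
        self._seen.add(key)
        return False


def estimated_cost(doc_tokens: int, question_tokens: int, cache_hit: bool,
                   price_per_token: float = 1.0, cached_rate: float = 0.1) -> float:
    """Charge cached document tokens at a reduced rate (assumed 10% of full price)."""
    doc_rate = price_per_token * (cached_rate if cache_hit else 1.0)
    return doc_tokens * doc_rate + question_tokens * price_per_token


if __name__ == "__main__":
    cache = DocumentCache()
    document = "full text of a long contract " * 200
    for question in ["Who are the parties?", "What is the termination clause?"]:
        hit = cache.is_cached(document)
        cost = estimated_cost(len(document.split()), len(question.split()), hit)
        print(route(question), "cache hit" if hit else "cache miss", round(cost, 2))
```

In this toy setup, the second question about the same document is a cache hit, so the document portion of its cost is billed at the reduced rate, while both short questions are routed to the cheaper tier. Real pricing and routing decisions depend on the models involved.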

Significance of Developments

These advancements are crucial as businesses seek to optimize their use of generative AI while managing costs. By implementing caching and intelligent routing, AWS not only enhances the efficiency of LLMs but also supports a broader range of applications. The introduction of a marketplace for specialized models further enables companies to tailor their AI solutions to unique requirements, driving innovation and competitiveness in the industry.