Understanding Character.AI

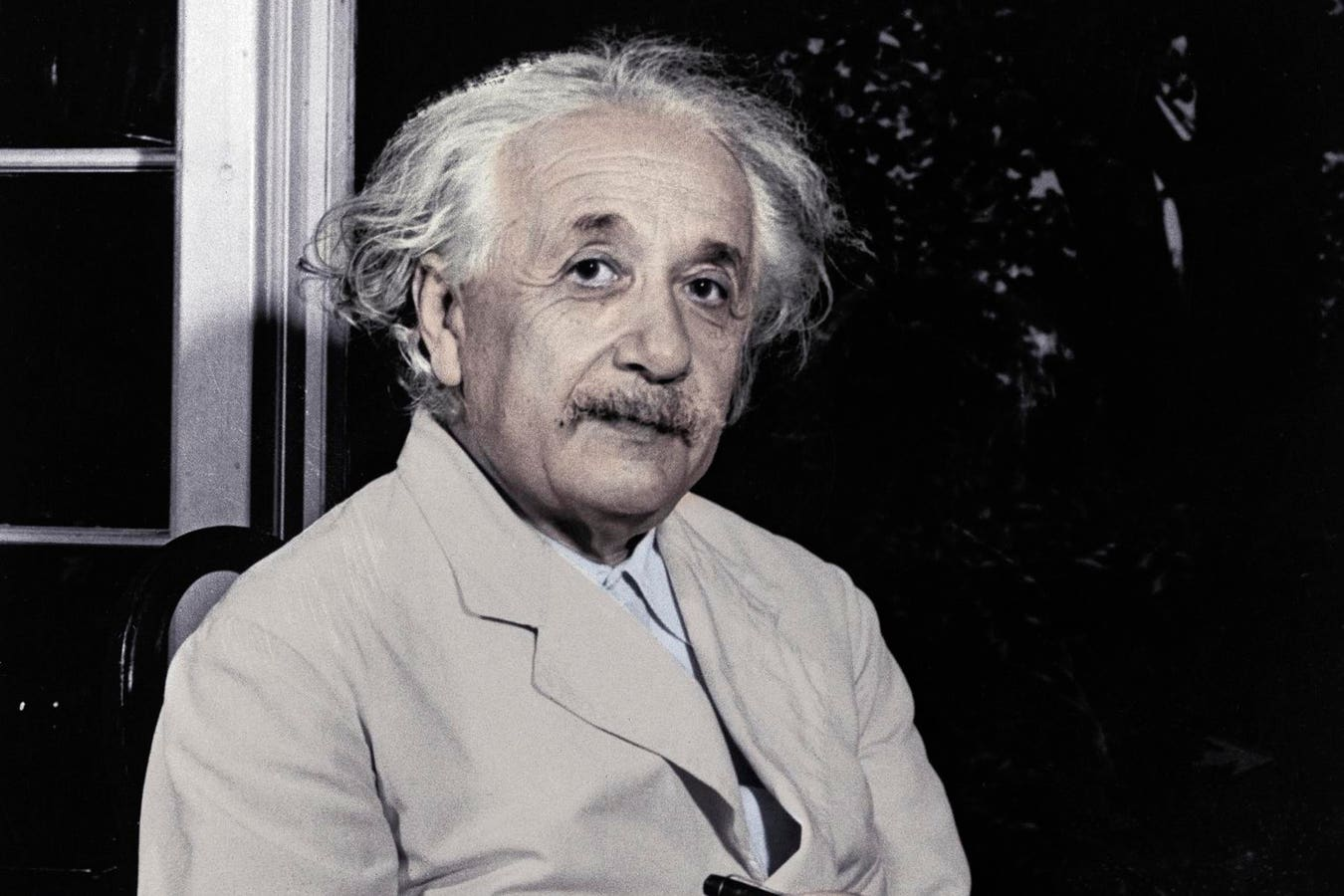

Character.AI is a platform that lets users converse with digital avatars modeled on fictional and historical figures. Users can talk with characters such as Einstein or JFK and receive responses that mimic their personalities. The technology aims to address loneliness in modern society while offering a unique way to connect with the past. However, the platform faces challenges, particularly around user safety and the ethical implications of such interactions.

Key Details

- The platform was co-founded by Noam Shazeer, a former Google engineer, who later returned to Google after launching Character.AI.

- Recent legal issues have arisen, including a lawsuit from a mother whose son allegedly formed a harmful attachment to an AI character.

- Character.AI claims protection under the First Amendment, arguing that its platform is similar to computer code in terms of liability.

- There is a growing conversation about the need for AI literacy, emphasizing safe and responsible engagement with AI tools.

The Bigger Picture

The emergence of platforms like Character.AI highlights the need to balance innovation with user safety. As AI technology continues to evolve, it is crucial to address potential risks while encouraging responsible use. Education on AI safety should be a priority, so that users, especially young people, understand the implications of their interactions with AI. This approach can help mitigate harm and promote a healthier relationship with technology, ultimately benefiting society as a whole.