Understanding the Shift in AI Training

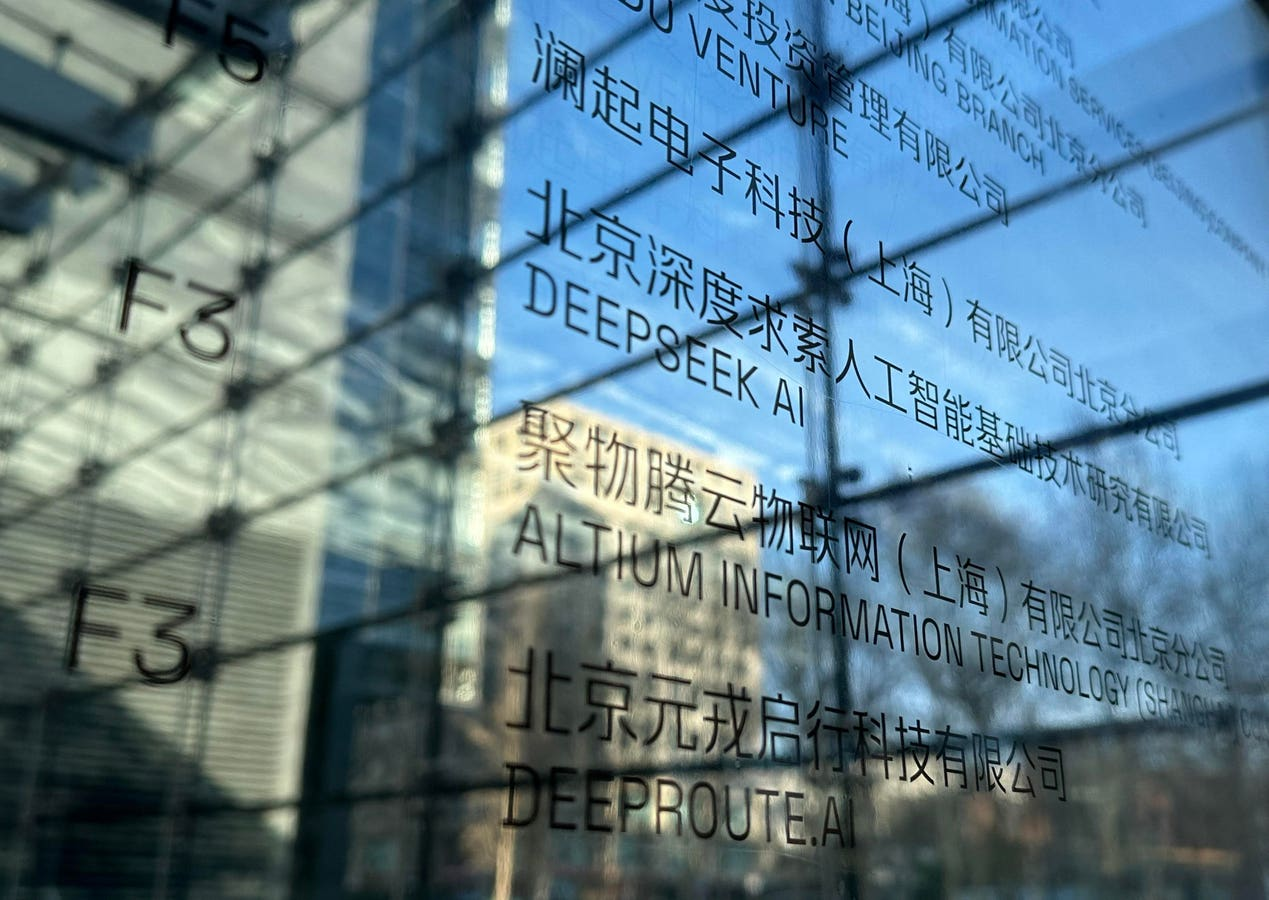

DeepSeek, a Chinese AI company, has announced its DeepSeek-R1 model, claiming it performs on par with leading AI systems at a significantly lower training cost. The announcement triggered a sell-off in technology stocks, with Nvidia among the hardest hit. If DeepSeek’s claims are accurate, this could transform AI training by making it accessible to a much wider range of enterprises, not only the largest tech companies.

Key Details

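- DeepSeek reports that DeepSeek-V3, the base model on which R1 is built, was trained on 2,048 Nvidia H800 GPUs at an estimated cost of about $5.58 million, a fraction of what comparable models are typically reported to need. The figure covers the final training run only, not earlier research or experiments.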

- The company used efficiency-focused training techniques, such as FP8 mixed-precision training and a Mixture-of-Experts (MoE) architecture, to cut compute requirements.

- Even with less reliance on high-end GPUs, DeepSeek's approach still requires strong supporting infrastructure, such as high-throughput storage and low-latency networking.

- The shift could lead to increased demand for mid-tier GPUs and create opportunities for infrastructure providers like NetApp and Dell Technologies.
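
The roughly $5.58 million figure can be reproduced with simple arithmetic. The sketch below is illustrative only: the GPU-hour total (about 2.788 million H800 GPU-hours) and the $2 per GPU-hour rental rate are taken from DeepSeek's own DeepSeek-V3 technical report, not from this article, and the rate is an assumed rental price rather than an audited cost. The function and constant names are hypothetical.

```python
# Back-of-the-envelope check of the reported training cost.
# Inputs are assumptions drawn from DeepSeek's V3 technical report.

def training_cost_usd(gpu_hours: float, rate_per_gpu_hour: float) -> float:
    """Rental-style cost estimate: total GPU-hours times hourly rate."""
    return gpu_hours * rate_per_gpu_hour


def wall_clock_days(gpu_hours: float, num_gpus: int) -> float:
    """Approximate elapsed time if all GPUs run in parallel the whole time."""
    return gpu_hours / num_gpus / 24


GPU_HOURS = 2_788_000      # reported H800 GPU-hours (assumption)
RATE_PER_GPU_HOUR = 2.0    # assumed rental price in USD (assumption)
NUM_GPUS = 2_048           # cluster size cited in the list above

cost = training_cost_usd(GPU_HOURS, RATE_PER_GPU_HOUR)
days = wall_clock_days(GPU_HOURS, NUM_GPUS)

print(f"Estimated cost: ${cost / 1e6:.2f} million")
print(f"Approximate wall-clock time: {days:.0f} days")
```

Because the estimate scales linearly with both inputs, a different assumed hourly rate changes the headline number proportionally. That is one reason such figures are best read as a rental-equivalent estimate and not as a full accounting of development cost.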

Why This Matters

The implications of DeepSeek’s announcement extend beyond AI training itself. If lower-cost training proves repeatable, it would broaden access to AI technologies and could expand the market for infrastructure providers. Companies like Broadcom and Marvell may benefit from increased demand for networking solutions. For Nvidia, adapting to these changes will be crucial to maintaining its market position as it faces competition from emerging players. More broadly, the development points to an AI infrastructure market that rewards efficiency and accessibility as well as raw compute, which could reshape how businesses adopt artificial intelligence.