Understanding the Landscape

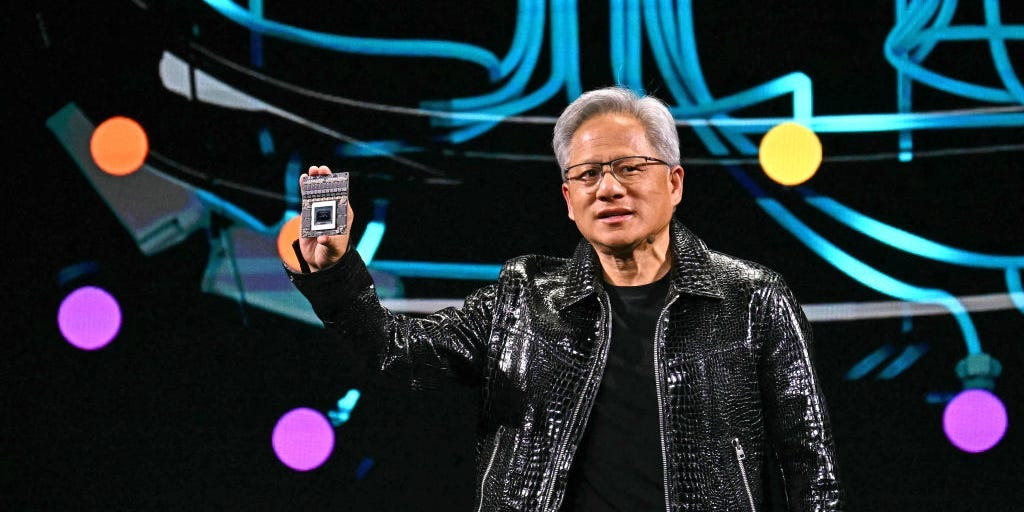

Nvidia CEO Jensen Huang recently highlighted the growing demand for computing resources in AI, especially from reasoning models. During an earnings call, he noted that these models require significantly more computing power than traditional models. Despite Nvidia’s strong revenue performance, investor reaction has been muted. Two concerns weigh on the stock: rising competition in AI inference, and the Chinese firm DeepSeek, whose efficient open-source models have raised questions about how much computing power capable AI actually requires.

Key Insights

- According to Huang, reasoning models can consume up to 100 times more computing resources than traditional models.

- Nvidia’s current demand is heavily driven by inference, the process of running trained AI models to generate responses.

- Analysts predict increased competition in the inference market, potentially impacting Nvidia’s market share.

- Startups such as Tenstorrent and Etched have raised substantial funding to develop inference chips, posing a potential threat to Nvidia’s dominance.

The Bigger Picture

The rise of reasoning models and increased competition in the AI inference market could reshape the landscape for Nvidia. While the company currently leads in AI computing, the emergence of efficient models and custom chips from rivals may challenge its position. Investors are closely monitoring these developments, as they could significantly impact Nvidia’s future growth and market share in the AI sector.