Understanding the Landscape of Generative AI

A recent surge in AI-generated content has raised alarms about its potential for manipulation in conflict zones. Videos with synthetic voiceovers discussing Mali’s politics and military presence illustrate how generative AI can distort public perception. Unlike traditional AI, which analyzes existing data, generative AI creates new content. That capability can fuel propaganda and misinformation, but it also offers tools for preventing atrocities and improving crisis responses.

Key Insights

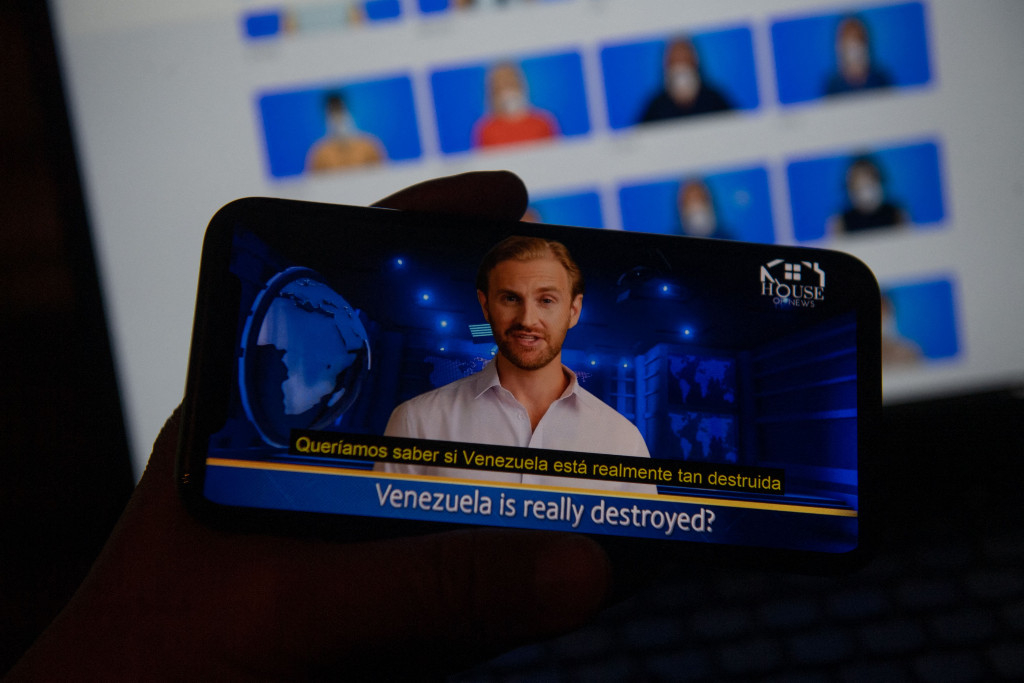

- Generative AI allows mass production of deceptive content at low cost, as seen in Venezuela’s use of AI-generated news anchors.

- Enhanced personalization enables targeted propaganda, exemplified by deepfake calls impersonating political figures in the U.S.

- Compositional deepfakes can manipulate historical narratives by embedding false events into real timelines.

- The “liar’s dividend” lets genuine evidence be dismissed as fake, complicating accountability in conflicts.

The Bigger Picture

The risks associated with generative AI are significant, but its potential to aid in atrocity prevention is equally compelling. By processing extensive data rapidly, AI can enhance early warning systems and optimize humanitarian responses. However, realizing these benefits requires robust international cooperation and legal frameworks to ensure responsible use. As generative AI continues to evolve, the focus must be on balancing innovation with human rights and community engagement.