Unveiling a Disturbing Discovery

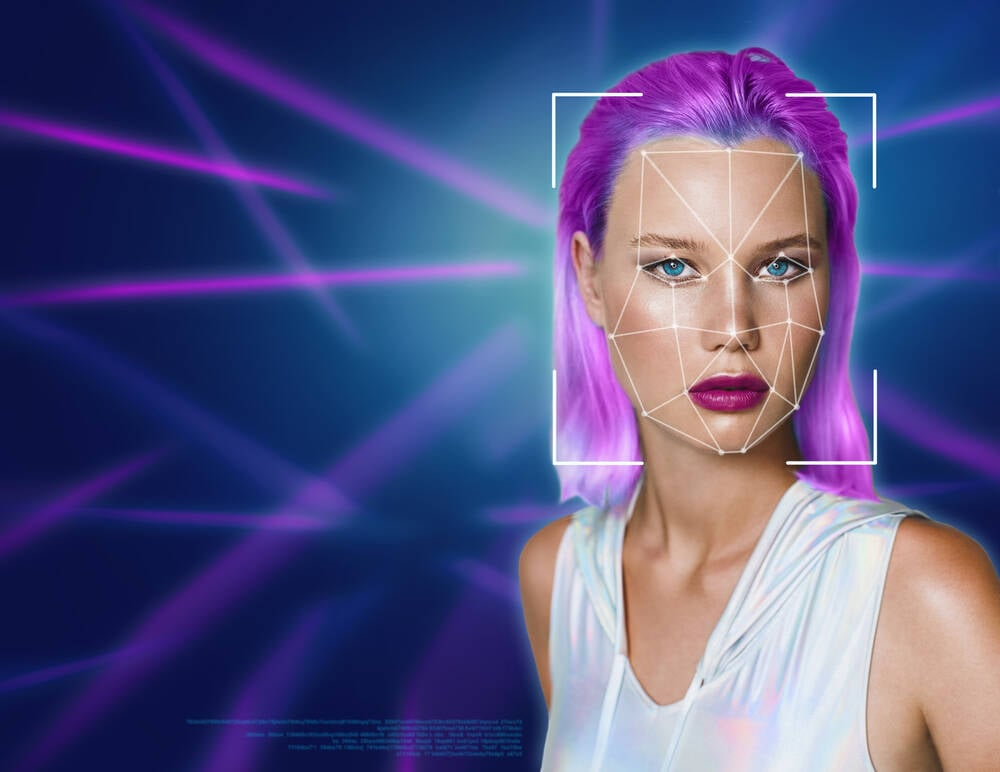

A researcher recently uncovered a significant data exposure involving an unprotected, publicly accessible Amazon Web Services (AWS) S3 bucket. The bucket contained nearly 94,000 explicit AI-generated images, including disturbing depictions of children and celebrities. The images were linked to a South Korean company, AI-NOMIS, and its web app, GenNomis. After the researcher alerted the company, the bucket and the associated websites were quickly taken offline. The incident raises concerns about both data security and the ethical use of AI technology.

Key Findings

- The exposed bucket contained 93,485 images, along with JSON files that logged the prompts users had submitted.

- Users could generate explicit content, including child-like depictions, by entering text prompts.

- GenNomis claimed to prohibit such content, but the material found in the bucket suggests these rules were not effectively enforced.

- The incident highlights gaps in security and content moderation on AI image-generation platforms.

The Bigger Picture

This discovery raises pressing questions about the responsibility of AI developers to ensure their technologies are not misused. As AI-generated explicit content becomes more common, regulators worldwide are taking action, and governments are moving to criminalize the creation and distribution of non-consensual deepfake images. Because demand for such content persists, companies must implement robust safeguards, and legislation must catch up with technological advances. The ethical implications of AI-generated content have to be addressed to protect individuals from harm and exploitation.