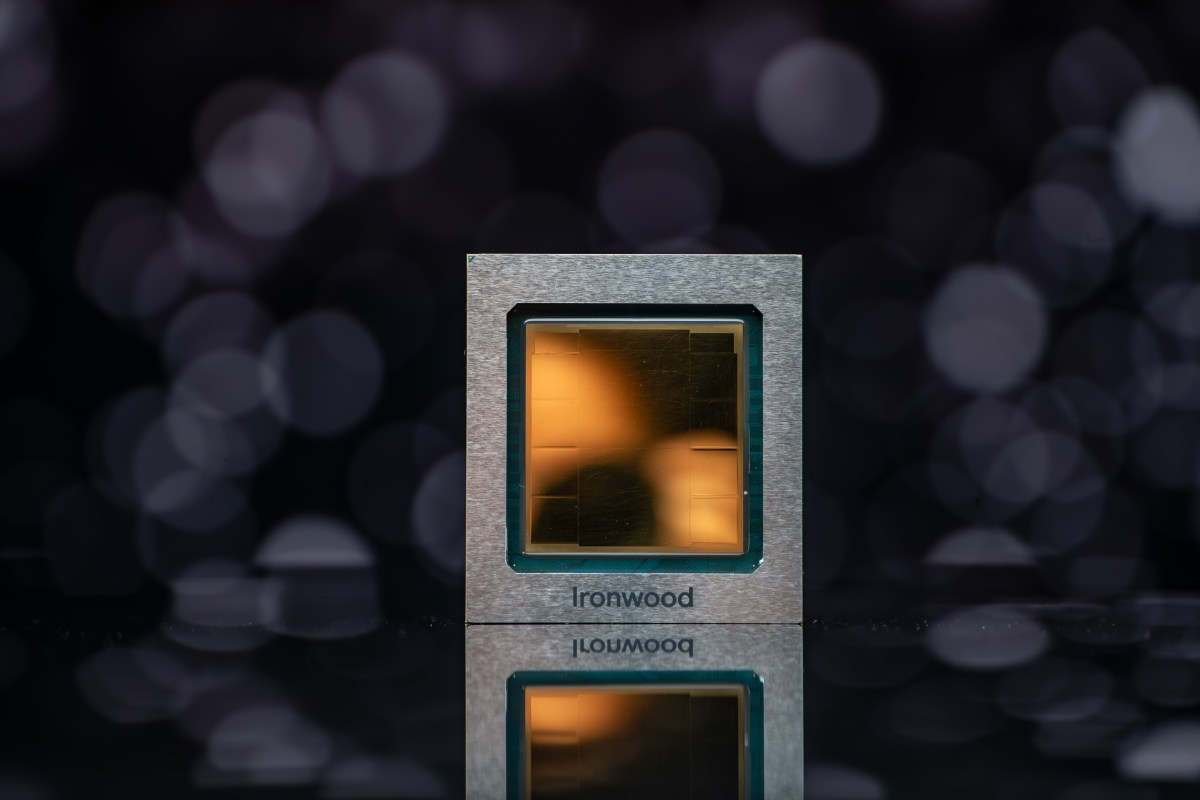

Overview of Ironwood’s Capabilities

Google introduced Ironwood, its seventh-generation TPU AI accelerator chip, at its Cloud Next conference. The chip is designed specifically for inference, that is, for running trained AI models rather than training them. Ironwood will be available later this year for Google Cloud customers in two configurations: a 256-chip cluster and a larger 9,216-chip cluster. Its focus on inference performance positions it as a strong competitor in the growing AI accelerator market.

Key Features of Ironwood

- Each Ironwood chip delivers a peak performance of 4,614 TFLOPs.

- Each chip is equipped with 192 GB of dedicated RAM, with memory bandwidth of nearly 7.4 TB/s.

- The chip includes a specialized core called SparseCore, optimized for handling advanced ranking and recommendation tasks.

- Its architecture reduces data movement and latency, leading to improved energy efficiency.
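To put the per-chip figures in context, the short sketch below multiplies them out for the two cluster sizes mentioned in the overview. It assumes perfectly linear scaling of the quoted peak numbers, so the results are theoretical upper bounds rather than measured throughput; the function and constant names are illustrative only.

```python
# Per-chip figures quoted in the feature list above.
PEAK_TFLOPS_PER_CHIP = 4_614
MEMORY_GB_PER_CHIP = 192

# Cluster sizes quoted in the overview.
CLUSTER_SIZES = (256, 9_216)


def cluster_totals(chips: int) -> dict:
    """Return theoretical aggregate peaks, assuming perfectly linear scaling."""
    total_tflops = chips * PEAK_TFLOPS_PER_CHIP
    total_memory_gb = chips * MEMORY_GB_PER_CHIP
    return {
        "chips": chips,
        "peak_exaflops": total_tflops / 1_000_000,  # 1 EFLOP = 1,000,000 TFLOPs
        "memory_tb": total_memory_gb / 1_000,       # decimal terabytes
    }


if __name__ == "__main__":
    for size in CLUSTER_SIZES:
        totals = cluster_totals(size)
        print(
            f"{totals['chips']:>5} chips: "
            f"{totals['peak_exaflops']:.2f} EFLOPs peak, "
            f"{totals['memory_tb']:.1f} TB memory"
        )
```

Under that assumption, the 256-chip cluster works out to roughly 1.18 exaFLOPs of peak compute and about 49 TB of memory, and the 9,216-chip cluster to roughly 42.5 exaFLOPs and about 1,769 TB.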

The Importance of Ironwood in AI Development

Ironwood arrives as competition among tech giants in the AI accelerator field intensifies. With companies like Nvidia, Amazon, and Microsoft also developing their own solutions, Google is positioning Ironwood as a strong option for businesses looking to run AI at scale. The advance is not just about speed; it’s also about efficiency and reliability, which are vital for the future of AI applications. Ironwood is expected to power AI applications across a range of industries.