Understanding the Research

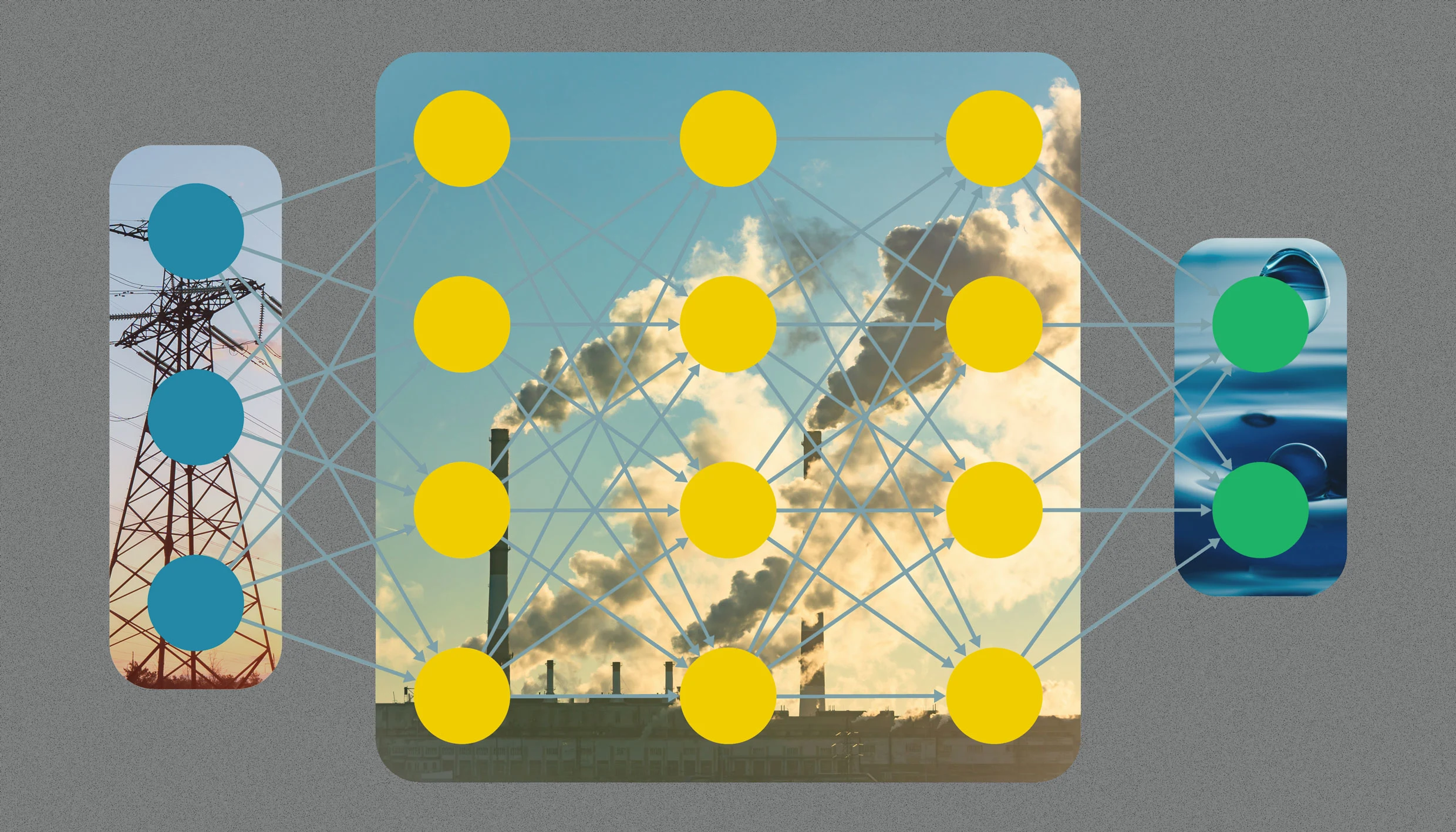

A recent study sheds light on the environmental cost of AI models by quantifying their energy use and carbon footprint. Researchers from several universities built a benchmark that evaluates the environmental impact of 30 popular AI models. They combined performance data from public APIs with data on the power grids that supply the providers' data centers, then used these estimates to rank the models by eco-efficiency. The study matters because it highlights costs of everyday AI use that are often overlooked.

Key Findings

- OpenAI’s o3 model and DeepSeek’s reasoning model each use over 33 watt-hours (Wh) per long prompt, far more than smaller models such as GPT-4.1 nano.

- Claude 3.7 Sonnet ranked as the most eco-efficient model, a result that highlights how strongly the underlying hardware affects energy consumption.

- Energy use rises with query length, but even short prompts add up to a significant impact when multiplied across many users.

- At its daily query volume, GPT-4o could consume an estimated 392 to 463 gigawatt-hours (GWh) of electricity per year, comparable to the annual electricity use of 35,000 homes.
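To make the annual GPT-4o range easier to picture, it can be broken down with simple unit conversions. The Python sketch below uses only the figures quoted in this article (392 to 463 GWh per year and 35,000 homes). The helper names are hypothetical, and this is back-of-envelope arithmetic, not the study's own methodology.

```python
# Back-of-envelope conversions using only the article's figures:
# 392 to 463 GWh per year for GPT-4o, compared with 35,000 homes.
# Illustrative only; this is not how the study derived its estimates.

DAYS_PER_YEAR = 365
HOURS_PER_YEAR = DAYS_PER_YEAR * 24
HOMES = 35_000


def daily_mwh(annual_gwh: float) -> float:
    """Average energy per day, in MWh, for an annual total given in GWh."""
    return annual_gwh * 1_000 / DAYS_PER_YEAR


def average_power_mw(annual_gwh: float) -> float:
    """Continuous average power draw, in MW, implied by an annual total."""
    return annual_gwh * 1_000 / HOURS_PER_YEAR


def per_home_mwh(annual_gwh: float, homes: int = HOMES) -> float:
    """Annual energy per home, in MWh, implied by the home comparison."""
    return annual_gwh * 1_000 / homes


for gwh in (392, 463):
    print(f"{gwh} GWh/yr -> {daily_mwh(gwh):,.0f} MWh/day, "
          f"{average_power_mw(gwh):,.1f} MW average, "
          f"{per_home_mwh(gwh):,.1f} MWh per home per year")
```

Running it prints roughly 1,074 to 1,268 MWh per day, equivalent to a constant draw of about 44.7 to 52.9 MW. The implied 11.2 to 13.2 MWh per home per year shows what household consumption the 35,000-home comparison assumes. The article does not say which household average the researchers used.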

The Bigger Picture

The findings reinforce growing concern about the environmental costs of AI. As adoption increases, the cumulative impact can be substantial. For instance, a year of GPT-4o use is estimated to consume as much freshwater as 1.2 million people need. The researchers call for greater transparency and accountability from AI developers about their models' environmental footprint. Measuring these impacts is a prerequisite for more sustainable AI practices.