Understanding the Breakthrough

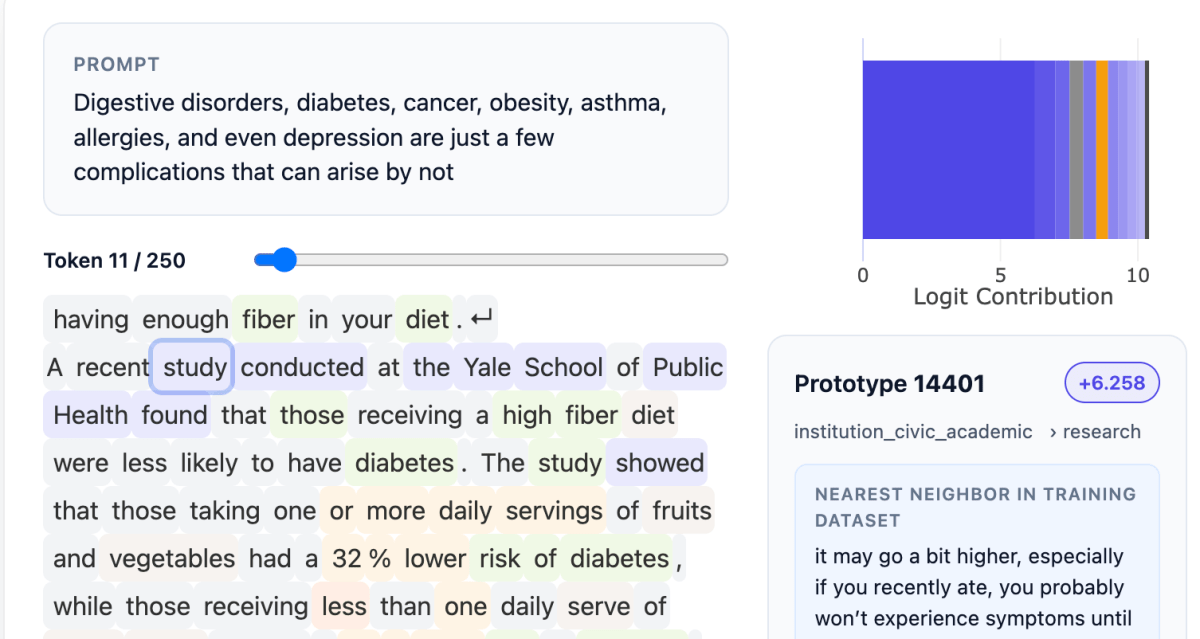

Guide Labs, a San Francisco startup, has introduced Steerling-8B, an 8-billion-parameter large language model (LLM). The model aims to address the opaque, often-misunderstood nature of deep learning models by making their behavior more interpretable. Unlike traditional models, Steerling-8B lets users trace every output back to its origins in the training data. This transparency matters for understanding how a model represents complex concepts like humor and gender, and how it handles factual references.

Key Features of Steerling-8B

- The model employs a new architecture that categorizes data into traceable groups, enhancing interpretability.

- Developed by CEO Julius Adebayo and chief science officer Aya Abdelsalam Ismail, it builds on Adebayo’s previous research at MIT.

- Steerling-8B is designed to preserve emergent behaviors, so it can still generalize beyond its training data.

- The model is said to achieve 90% of the performance of larger models while requiring less training data, which points to an efficient design.
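The article does not spell out how the architecture works, so the following is only a toy sketch of the general idea of sorting training data into named groups and tracing an output token back to them. It is a plain lookup index, not a neural network and not Guide Labs' implementation; the example sentences, the group names, and the functions `build_concept_index` and `trace` are all hypothetical.

```python
# Toy illustration only: this is NOT Steerling-8B's actual architecture.
from collections import defaultdict

# Hypothetical training examples, each tagged with a concept group.
TRAINING_DATA = [
    ("why did the chicken cross the road", "humor"),
    ("knock knock who is there", "humor"),
    ("paris is the capital of france", "factual"),
    ("water boils at 100 degrees celsius", "factual"),
]


def build_concept_index(examples):
    """Map each word to the concept groups and example ids it appeared in."""
    index = defaultdict(lambda: defaultdict(set))
    for example_id, (text, concept) in enumerate(examples):
        for word in text.split():
            index[word][concept].add(example_id)
    return index


def trace(tokens, index):
    """For each output token, list its concept groups and source example ids."""
    report = []
    for tok in tokens:
        # .get() avoids creating empty entries for tokens never seen in training.
        sources = {c: sorted(ids) for c, ids in index.get(tok, {}).items()}
        report.append((tok, sources))
    return report


index = build_concept_index(TRAINING_DATA)
report = trace(["paris", "chicken", "unknown"], index)
for token, sources in report:
    print(token, sources)
```

In this sketch a token that never appeared in the training examples comes back with an empty set of sources. A real interpretable model would have to do this kind of attribution inside the network itself, which is a far harder problem than a lookup table.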

The Importance of Interpretability

The need for interpretable AI is growing, especially in regulated industries like finance and healthcare, where understanding model decisions is vital. Adebayo believes that making AI more transparent can help prevent misuse, for example by making it possible to block the use of copyrighted content or to control how a model handles sensitive topics like violence. The push for interpretable models is not just about enhancing performance; it’s about ensuring that AI systems remain accountable and understandable as they become more integrated into society. This shift could lead to more responsible AI development, benefiting humanity in the long run.